Humans are a product of nature and nurture, meaning our phenotype (the composite of all outward, measurable, characteristics, including our health parameters) is a function of two things: our genotype (the DNA program in all cells) and the environment (all inputs to a human, like food and medicine). This arrangement is analogous to how the output of a program (such as a search engine) is a function of both the program and the input (keywords typed by a user). Using the same input with a different program (such as Google search vs. Bing) can result in different output. In this analogy, the role of the medical professional is to provide information that is “diagnostic” (such as, “Is there a bug in the program based on observed output?”), “prognostic” (such as, “Can output/outcome be predicted, given specific inputs, like diet?”), or “therapeutic” (such as, “Can a specific input, like a drug, lead to the desired output?”). Also, the electronic medical record (EMR) of a patient can be viewed as an archive of previously acquired inputs and outputs.

Key Insights

- Making genomics interactive is potentially transformative, similar to the shift from batch processing to time sharing.

- Analogous to Internet layering, genome processing can be layered into an instrument layer, an evidence layer, and an inference layer.

- A declarative query language we call GQL enables automatic optimization and provenance and privacy checks more readily than procedural alternatives used today.

Unlike computers, the human program is largely hidden. Hence, traditional medicine is “depersonalized,” with doctors providing treatment by comparing the patient’s phenotype (symptoms) against empirical observations of outputs from a large number of individuals. Limited customization is based on coarse classes, like “race.” All this changed with the sequencing of the human genome in early 2000 and the subsequent drop in costs from hundreds of millions of dollars to $1,000 on small desktop sequencing machines. The ability to cheaply read the program of each human underlies the great promise of personalized medicine, or treatment based on symptoms and the patient’s distinctive DNA program.

We frame this point with a classic example: The blood-thinner drug Warfarin is widely prescribed to prevent blood clots. Dosage is critical; too high and the patient can bleed to death, too low and the drug might not prevent life-threatening blood clots. Often, the right dosage is established through multiple visits to the clinic and regular testing. However, recent reports16 suggest that knowledge of the patient’s genetic program can help establish the right dosage. We outline this approach (genetic association and discovery workflow) in three steps:

Collect samples. Collect a sample of affected and “genetically matched” control individuals; then sample DNA and catalog variations;

Identify variations. Identify and report variations that co-segregate, or correlate, with the affected/control status of the individual; and

Follow up with studies. Follow up on the genetic basis of the correlation through costly studies and experiments in animal models and clinical trials; then transfer knowledge to the clinic.

Even with its success, the discovery approach involves complications: First, studies are resource-intensive, requiring identifying and sequencing large cohorts of individuals with and without a disease. Second, it is unclear how to apply study results to a specific individual, especially one genetically different from the investigated cohort. Finally, data reuse is difficult; significant computation is needed to dig out data from a previous study, and much care is required to reuse it. We contrast “discovery work flow” with “personalized medicine.” Here, a physician treating individual A may query a database for treatments suitable for patients with genetic variations similar to those of A or query for patients genetically similar to A for treatments and dosages that worked well for these patients.

The notion of computational tools enabling personalized medicine is gaining currency. Some groups have proposed specialized databases for cancer genomes,14 though details are still emerging. Here, we take a more general view, allowing for broader access to genomic information and enabling both discovery and personalized medicine.

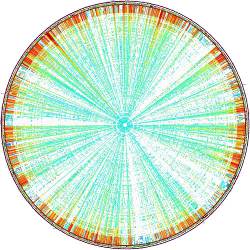

We start with a shift in perspective implicit in the personalized-genomics community. Instead of sequencing small numbers of people on an as-needed basis, we assume individuals are sequenced at birth and their personal genome is a part of their EMR, available to be queried by researchers and medical personnel. This scenario is realistic considering how quickly sequencing costs are falling. This shift in perspective enables personalized medicine and large-scale discovery (see Figure 1).

In choosing the Warfarin dosage for a patient, a medical team might identify a collection of individuals genetically similar to the patient and on a Warfarin regimen; query their genomes and EMRs for genetic variation in candidate genes and Warfarin dosage, respectively; and choose the appropriate dosage based on the patient’s specific genetic program. The ability to logically select from a very large database of individuals using the phenotype as a key removes the first problem with the discovery workflow. Using genetic variations specific to individual A as a key to return treatments (that work well for such variations) addresses the second problem. Finally, if the accompanying software system has good abstractions, then the third problem (computational burden to reuse data) is greatly eased. Here we focus on key software abstractions for genomics, suggesting that like other CS areas (such as VLSI/systems), software abstractions will enable genomic medicine.

We start with basic genetics using programming metaphors, then describe trends in sequencing and how genetic variations are called today and outline our vision for a vast genomic database built in layers; the key idea is the separation of “evidence” and “inference.” We then propose a language for specifying genome queries and end by outlining research directions for other areas of computer science to further this vision.

We limit our scope to genomics, ignoring dynamic aspects of genomic analysis (such as transcriptomics, proteomics expression, and networks). Genomic information is traditionally analyzed using two complementary paradigms: First, in comparative genomics, where different species are compared, most regions are dissimilar, and the conserved regions are functionally interesting.6,7 The second is population genomics, where genomes from a single population are compared under the baseline hypothesis that the genomes are identical, and it is the variations that define phenotypes and are functionally interesting. We focus on population genomics and its application to personalized medicine and do not discuss specific sequencing technologies (such as strobe sequencing vs. color space encoding).

Genetics for Computer Scientists

We start with a brief introduction to genetics for computer scientists; standard references (such as Alberts et al.1) provide more details. All living creatures consist of cells, each one like a computer running a program, or its DNA. The program uses three billion characters (nucleotides/base pairs, or bp) from a fixed alphabet {A, C, G, T}. Humans are diploid; that is, two programs control each cell, one inherited from the father and one from the mother. Further, each program is broken up into 23 “modules” called chromosomes, and within each chromosome are sparsely scattered small functional blocks called genes. The module pairs from the father and mother are called homologous chromosomes, with each human having a pair of (homologous) genes from each parent.

The “hardware” of the cell consists of cellular organelles and proteins—the cellular machinery. Proteins perform specific cellular functions (such as catalyzing metabolic reactions and transducing signals). The gene contains the “code” for manufacturing proteins, with each gene executing in one of many “ribosomes” (analogous to a CPU). Information travels from the nucleus (where the packaged DNA resides) to the ribosome via a “messenger” molecule (mRNA), essentially a copy of the coding DNA. The ribosome “reads” the code 3 bases (one codon) at a time; each codon is analogous to an OpCode instructing the ribosome to attach a specific amino acid to the protein sequence being constructed. The DNA program thus provides instructions for making the hardware that in turn performs all cellular functions.

The key to efficiency in genomics is the premise that an individual’s genetic record can be summarized succinctly by a much smaller list of individual genetic variations.

A change (mutation) in the DNA can change the amino acid and, correspondingly, the cellular machinery resulting in a different phenotype (output). In the Mendelian paradigm, each of the two homologous copies of the gene controls one phenotypic trait (such as ability to clot blood). A mutation in one gene might affect the phenotype strongly (dominant), not at all (recessive mutation), or somewhere in between. Most phenotypes are complex, controlled by the paired copies of multiple genes. Nevertheless, DNA controls traits, so even the simplest queries on DNA are useful (such as “Compared to a ‘normal’ individual, have parts of the patient’s DNA program mutated?”).

Advances in sequencing have made it possible to cheaply scan an individual’s genetic program for mutations, or variations. First, a physical process is used to randomly shear genomic DNA into small inserts of size 500bp10,000bp. The sequencing machine deciphers the DNA from small fragments, or reads (length L ≈ 100bp) at one or both ends of the inserts. The genomic information is thus presented as a collection of small strings over A,C,G,T sampled from a random location on a (maternal or paternal) chromosome. It is natural to assume these fragments will be assembled like a giant jigsaw puzzle. However, such an assembly is complex and computationally expensive due to the large amount of repetitive portions in human genomes.

Mapping and variation. An alternative to assembly is to align, or map, the non-repetitive fragments of a sampled genome (the donor/patient genome) to a reference human genome. The current reference is a single (haploid) copy of each chromosome sampled from multiple individuals. Mapping involves finding a location on the reference where the genomic substring matches the query fragment up to a small number of errors. The errors might be sequencing errors or true variations in the query relative to the reference. Mapping works because string search is more tractable than assembly and any pair of human genomes is identical to one in 1,000 locations.

A true deviation in the donor sequence relative to the reference is called a variation. The simplest variation is a single nucleotide (or single character) variation (SNV). Recall that a donor genome consists of two copies; a variation that occurs in both copies is called homozygous, and a variation in only one copy is called heterozygous. The specific value of the variant is called an allele; for example, suppose the DNA at homologous chromosomes of individual A compared to the reference is

…ATG…GAGTA… Reference Assembly

…ACG…GAGTA… Maternal chromosome 1

…ATG…GAGCA… Paternal chromosome

Individual A is bi-allelic, or heterozygous, at the two SNV sites and has the genotype …C/T…C/T…, and the genotypes are resolved into two haplotypes …C…T…, …T…C…

Sites containing SNVs that are prevalent in a population demarcate chromosomal positions as varying, or polymorphic. Consequently, these locations are called single nucleotide polymorphisms (SNPs). In discovery workflows, geneticists test populations to see if the occurrence of variation correlates, or associates, with the phenotype status of the individual.

So far we have discussed simple variations involving one or a small number of changes at a location. By contrast, geneticists also investigate structural variations in which large (1kbp up to several million bases) genomic fragments are deleted, inserted, translocated, duplicated, or inverted, relative to the reference.19

Sequencing Trends

Four technological trends are relevant for designing a genomic software architecture:

Reduced cost. While the Human Genome Project (http://www.genome.gov/) cost $100 million, human re-sequencing for redundant (15x) coverage now costs less than $5,000 in the U.S., projected to fall below $1,000. This implies universal sequencing may be realizable, and archiving and analysis, not sequencing, will dominate cost;

Short read lengths. New sequencing technologies largely sacrifice length and sequence quality to massive parallelism and high throughput. As “single-molecule” sequencing technologies emerge, reads may become long enough (~100Kbp) to allow de novo assembly. Nevertheless, raw reads will continue to be first-class entities;

Prohibitive assembly costs, paired-end sequencing. Repetitive regions cover 40% of the human genome. If the read length is shorter than the length of repetitive sequence, the genome cannot be assembled uniquely. Longer reads or reads sequenced from the ends of long clones (paired end reads) are necessary for resolving repeats and assembling sequences de novo. Sequenced reads are today mapped to a standard human reference to identify variations correlated to phenotype variation; and

Computer system costs. Some studies15,18 have shown the cost of disk storage for genomes is now greater (and decreasing more slowly) than the cost of sequencing.

We begin with exemplar queries on genomic data that illustrate the difficulty of genomic analysis and lack of consensus as to a best method. Abstractions must be flexible enough to handle a variety of methods.

Variation Calling

The key to efficiency in genomics is the premise that an individual’s genetic record can be summarized succinctly by a much smaller list of individual genetic variations. While we develop this premise further in our layering proposal, we provide insight as to how variants are called today; the expert should skip this section and proceed to our layering proposal. We start with querying for SNVs in the donor genome, the simplest form of variation:

Calling SNVs. Figure 2 outlines how a mutation may be called. Consider the reference allele C. We see two copies of the donor genome with a G allele and some copies with a C, indicating a heterozygous SNV. If the variation is homozygous, all overlapping reads would be expected to have a G in that position, though even this simple call can be confounded. Some reads may have been mapped to the wrong place on the reference (such as the top donor read in the figure). The G/T mutation may not be correct, and the alignment of the incorrectly mapped read might present many variations. Even if the read is mapped correctly, sequencing errors could incorrectly appear as heterozygous mutations.

Mutation callers use statistical methods informed by mapping the quality of the read (such as number of potential places in the genome a read can map to), the quality score of a base call, and the distribution of bases or alleles in the reads for that location. Some mutation callers use evidence based on the surrounding locations (such as an excess of insertion/deletion events nearby suggesting alignment problems). The decision itself could be based on frequentist, Bayesian inference, or other machine-learning techniques. While SNP callers use various inference techniques, all refer to the same evidence—the set of reads overlapping the location of the SNP in question.

Calling structural variations. In addition to small nucleotide changes, larger structural variations involving insertion, deletion, and translocation of large genomic regions are another important source of genomic variation. Consider the example of deletions in the donor genome (see Figure 2b) in which a large segment of DNA is deleted, relative to the reference. If both copies of the donor genome are deleted, the deletion is homozygous; otherwise, it is heterozygous deletion. Deletions are detected through several techniques:

Paired-end mapping. Paired-end sequencing sequences both ends of large genomic fragments (sampled randomly from the donor genome). These fragments are size-selected to be tightly distributed around a specified length L(≈500). If paired reads end up mapping much further apart than L (length discordance), a geneticist can infer a deletion in the donor relative to the reference (such as read “a” in Figure 2b). If the deletion is heterozygous, the geneticist would see a mix of concordant and discordant reads at the breakpoints of the deletion.

Depth of coverage. “Depth” at a position refers to the count of reads mapped to the position. Deleted regions of the donor chromosome will have reduced coverage—roughly half for heterozygous deletions and zero for homozygous ones. Thus, read “b” in Figure 2b maps within the deleted region, but reads “a” and “c” do not.

Single-end mapped and split-reads. When a read maps to the breakpoint of the deletion on the donor it cannot be mapped back to the reference (Figure 2b, read “c”). In the case of a “clean” deletion, the prefix and suffix of the fragment can be mapped separately; such split-reads are indicative of deletion events.

Loss of heterozygosity. Consider the SNV locations on the donor genome. While sampling multiple polymorphic sites, a geneticist would expect a mix of heterozygous and homozygous sites. At a deletion, the single chromosome being sampled displays a loss of heterozygosity.

Even within the constraints of these four categories, a number of design decisions must be made by software tools to account for repetitive sequences and to reconcile conflicting evidence. Variant inference remains a challenging research problem.

Layering for Genomics

Our vision is inspired by analogy with systems and networks; for example, the Internet has dealt with a variety of new link technologies (from fiber to wireless) and applications (from email to social networks) via the “hourglass” model using the key abstractions of TCP and IP (see Figure 3a).

Similarly, we propose that genomic-processing software be layered into an instrument layer, a compression layer, an evidence layer, an inference layer, and a variation layer that can insulate genomic applications from sequencing technology. Such modularity requires computer systems to forgo efficiencies that can be gained by leaking information across layers; for example, biological inferences can be sharpened by considering which sequencing technology is used (such as Illumina and Life Technologies), but modularity is paramount.

Some initial interfaces are in vogue among geneticists today. Many instruments now produce sequence data in the “fastq” format. The output of mapping reads is often represented as “SAM/BAM” format, though other compressed formats have been proposed.10 At a higher level, standards (such as the Variant Call Format, or VCF) are used to describe variants (see Figure 3a).

GQL also supports multiple types of inference, changing definitions of variation and pooling evidence across instrument types.

We propose additional layering between the mapped tools and applications. Specifically, our architecture separates the collection of evidence required to support a query (deterministic, large data movement, standardized) from the inference (probabilistic, comparatively smaller data movement, little agreement on techniques). While inference methods vary considerably, the evidence for inferences is fairly standard. To gather it in a flexible, efficient manner, we propose a Genome Query Language (GQL). Though we do not address it here, a careful specification of a variation layer (see Figure 3a) is also important. While the data format of a variation is standardized using, say, VCF, the interface functions are not.

The case for an evidence layer. Genomes, each several hundred gigabytes long, are being produced at different locations around the world. To realize the vision outlined in Figure 1, individual laboratories must be able to process them to reveal variations and correlate them with medical out-comes/phenotypes at each place a discovery study or personalized medicine assay is undertaken. The obvious alternatives are not workable, as described in the following paragraphs:

Downloading raw data. Transporting 100Gb for each of 1,000 genomes across the network is infeasible today. Compression can mitigate (5x) but not completely avoid the problem. Massive computational infrastructure must be replicated at every study location for analysis.

Downloading variation information. Alternatively, the genomic repositories could run standard-variant-calling pipelines4 and produce much smaller lists of variations in a standard format (such as VCF). Unfortunately, variant calling is an inexact science; researchers often want to use their own callers and almost always want to see “evidence” for specific variants. Discovery applications thus very likely need raw genomic evidence. By contrast, personalized genomics applications might query only called variants and a knowledgebase that correlates genotypes and phenotypes. However, even medical personnel might occasionally need to review the raw evidence for critical diagnoses.

Our approach provides a desirable compromise, allowing retrieval of evidence for variations on demand through a query language. The query server itself uses a large compute (cloud) resource and implements a query interface that returns the subset of reads (evidence) supporting specific variations. Some recent approaches have indeed hinted at such an evidence layer, including SRA and Samtools, but in a limited scenario useful mainly for SNV/SNP calling. The Genome Analysis Toolkit (http://www.broadinstitute.org/gatk/) provides a procedural framework for genome analysis with built-in support for parallelism. However, our approach—GQL—goes further, allowing declarative querying for intervals with putative structural variation (such as with discrepant reads supporting a deletion) or copying number changes. GQL also supports multiple types of inference, changing definitions of variation and pooling evidence across instrument types.

Consider this complex biological query: Identify all deletions that disrupt genes in a certain biological network and the frequency of the deletions in a natural population. For any statistical-inference algorithm, the evidence would consist of mapped reads that satisfy certain properties, including: length-discordant reads; reads with reduced depth of coverage; and reads with one end unmapped. The evidence layer supports queries to get those reads and delivers the following benefits:

Alternate forms of evidence. The separation allows inference-layer designers to start thinking of alternate forms of evidence to improve the confidence of their queries (such as split-end reads that map to the deletion breakpoints);

The cloud. The evidence layer can be a data bottleneck, as it involves sifting through large sets of genomic reads. By contrast, the inference layer may be compute-intensive but typically works on smaller amounts of data (filtered by the evidence layer). The evidence layer can be implemented in the cloud, while the inference layer can be implemented either in the cloud or on client workstations; and

Moving target. A standardized evidence layer gives vendors time to create a fast, scalable implementation; by contrast, the inference layer is today a moving target.

Here, we develop this intuitive idea further by describing GQL, which is being developed to support the evidence layer:

Querying the Genome via GQL

We wanted to develop a query language that is complete (capable of handling all evidence level queries), efficient, and easy to express, and that uses standard input/output. Ideally, the language would allow selecting from a set of reads we call READS and output a subset of reads in a standard format, like BAM. GQL uses a standard SQL-like syntax that is easy to use and familiar to most programmers. However, a standard relational database does not work well.

GQL includes two fundamental types of relations: Reads mapped to the human genome often expressed as BAM files and tables of intervals representing “interesting” (functional) regions of the genome. While simple selection queries are exactly the same as in relational languages (select from reads where mapped pairs are far apart), many useful queries require joining relations using interval intersection, not equality as the join operator; for example, a geneticist might want to join a relation consisting of READS that map to gene exons, the gene regions that translate to proteins, but where the paired ends are far apart, indicating a deleted exon (see Figure 4).

GQL defines a special MapJoin operator to allow this, and some of the newer sequencing technologies allow multiple reads to be generated from the same physical clone. While not explicitly discussed here, the relations can be extended to this case. We also find it extremely helpful to have a third operator we call “project interval” to compute the largest continuous interval containing a merged representation of what may be a number of smaller intervals; example queries using these operations are outlined in Figure 4.

Sample queries. GQL is based on a genome query algebra, and, here, we discuss several examples of its expressive power. In a companion technical paper (to be published elsewhere), we show GQL’s expressive power captures the language of first-order logic over the relations discussed earlier, as well as a signature of aggregation functions.

What is the genotype at a specific position (such as SNV)?

Query. Define an interval by the triple <‘chr’, ‘beg’, ‘end’>, signifying the beginning and ending coordinates on a specific chromosome. Let the SNV of interest be located at a point interval A (<chr, beg=i, end=i>). The evidence for the genotype is provided by alignments of reads that map to the location; we can either query for the mapped reads or for the alignments themselves, which are often stored as a mapped read attribute (such as R.ALIGNSTR). Thus

What are the diploid haplotypes (phased genotypes) across a set of linked loci in a dataset?

Query. This query is more challenging than the first. Assembling haplotypes requires a collection of reads, each (perhaps along with their paired-end reads) connecting at least two polymorphic sites. Let attribute R.CloneId denote the clone identifier so the paired-end reads r1,r2 derived from the same clone satisfy r1.CloneId = r2.CloneId. Also, let relation S denote the collection of point intervals, one for each variant locus.

- Find a subset of reads mapping to the loci and the count of sites the reads or their paired-ends map to (call it count c)

- Return IDs of reads with count ≥2

What genomic loci are affected by copy number variations (CNVs)?

Query. If the number of donor reads mapping to a region exceeds some threshold T then the inference might be the region has been duplicated in the donor genome. Such CNVs have been implicated as an important variation for many disease phenotypes. To gather evidence, a geneticist would look to identify all intervals where the number of mapped reads exceeds, say, threshold t. Let G.loc denote a specific chromosome and location.

- Compute for each location the number of reads that map to the location

- Return all “merged regions” where the read count exceeds threshold t

Identify all regions in the donor genome with large deletions.

Query. As discussed earlier, the evidence for deletion comes from several sources. Suppose a user prefers discrepant paired-end mapping. Paired-end reads from clones of, say, length 500 should map ≈500bp apart on the reference genome. If, instead, the ends happen to map discrepantly far (such as l apart for some l >> 500, like l ≈ 10,000), they support the case for a deletion in the donor genome. The goal is to identify all regions with at least t discrepant paired-end reads:

- Use a join in which each record contains the mapping locations of the read, as well as its paired-end.

- Select records containing discrepant reads.

- Select intervals containing at least t discrepant reads.

Population-based queries. The true power of querying genomes comes from the ability to query populations. Indeed, existing tools (such as Samtools) support the ability to extract reads from multiple individuals at specific locations corresponding to polymorphic sites. GQL extends the full power of genomic queries to interrogate populations. In the Warfarin example, the goal was to query for Warfarin dosage and genetic variation in candidate genes (identified through a discovery work flow) among individuals on the Warfarin regimen. This suggests the following query: “Report Warfarin dosage and genomic intervals and reads in individuals such that the copy number of mapped reads is at least twice the expected coverage in the interval.”

This query would be similar to that of a single individual but repeated via a “join” with a population P, using

Using earlier arguments, GQL can be used to count the read depth and report high-CNV regions. A similar idea applies to a personalized workflow, where a geneticist might be interested in patients with copy numbers similar to the specific query individual.

Group inference without accurate individual inference. The ability to query populations has an important benefit: Individual genomes may have little evidence for SNV calls at low-coverage sequencing. However, if a large number of affected individuals in a population (such as 800 out of 1,000) all show the same SNV while controls do not, an inference tool can reliably predict an association, even with unreliable calls for individuals. While more work is required to demonstrate the benefits of group inference, the point is that GQL provides query support for group inference.

Prototype Implementation

We have developed a prototype implementation of a subset of GQL (see Figure 5). The uploaded genome (in BAM format) can be queried through a simple text interface that allows the user to write a GQL query. This query is compiled and executed and the output returned as a (smaller) BAM file that can be visualized through appropriate genome browsers (such as jbrowse, http://jbrowse.org/) or downloaded to the client for further analysis.

The implementation of “genome-query” has a customized parser that converts GQL to an intermediate representation. We included customized procedures for each of the algebraic operations, with some concessions for efficiency, mainly with respect to memory. Specifically, GQL uses interval trees to implement joins and customized indices (such as strength vectors) for efficient querying.

Challenges

We have made the case for a set of genomic layers, including an evidence layer where evidence is retrieved through GQL. Successful implementation of this vision depends on new ideas from computer science:

Query power (database theory). Is GQL sufficiently powerful to address all evidence-layer queries needed in practice? The goal is to have the evidence layer handle as much data-intensive computation as possible while preserving performance; without the performance goal, any query can be trivially satisfied by passing the entire genome to the inference layer. Note that GQL’s expressive power coincides with that of first-order logic over the schema of the three relations R, G, P, a signature of aggregation functions, and a group-by operator. However, user feedback may require GQL developers to add extensions, and, in implementing extensions, care must be taken to balance expressive power with efficient evaluation.

Query speed (database systems). We designed a corresponding algebra GQA as an internal representation for optimization and for evaluating query plans; for example, assume queries on populations are automatically decomposed into queries on individuals. Consider queries of the general form SELECT α FROM MAPJOIN R, G WHERE b. Two steps are needed to construct such a query:

- Select for relations that satisfy constraints b; and

- Project (while removing duplicates) onto attributes α.

GQL uses a location-based index LTOR, where LTOR(l) is a pointer to first read that maps to the point-interval l. For each individual, GQL keeps a compressed index of the mapped reads in memory. The index can be used for select operations based on specific locations (such as reads that map to specific genes).

The true power of querying genomes comes from the ability to query populations.

However, many queries involve scanning the entire genome for maximal intervals; for example, find all maximal regions where there is a disproportionate increase in the number of mapped reads (high copy number). For efficient implementation of these queries, GQL constructs special indices that allow filtering for reads according to a user-defined constraint. Define a strength-vector SΘ for a constraint Θ as a vector of length G (the entire genome). Any location l

G, SΘ [l] gives the strength of the evidence at that location and can be pre-computed for common constraints Θ. To reduce memory, GQL also chooses a minimum strength cut-off and maintains CΘ,t as a sorted sequence of intervals i1,i2,… such that each ij is a maximal interval satisfying SΘ[l]≥t for all l

G, SΘ [l] gives the strength of the evidence at that location and can be pre-computed for common constraints Θ. To reduce memory, GQL also chooses a minimum strength cut-off and maintains CΘ,t as a sorted sequence of intervals i1,i2,… such that each ij is a maximal interval satisfying SΘ[l]≥t for all l

ij. The compressed vectors reduce memory and computation requirements and can be recomputed on demand.

ij. The compressed vectors reduce memory and computation requirements and can be recomputed on demand.

EMRs (information retrieval). The phenotypes associated with each sequenced individual are already in patient medical records. Initial results from the eMERGE network indicate that, for a limited set of diseases, EMRs can be used for phenotype characterization in genome-wide association studies within a reasonable margin of error.9,13 We anticipate that most health-care institutions will be using EMRs by 2014, given incentives provided by the Health Information Technology for Economic and Clinical Health Act of 2009.17 Increasing adherence to interoperability standards5 and advances in biomedical natural language processing12 make efficient querying possible. However, there is no integration of genotype and phenotype data today. GQL should be useful for both interrogating a single genome and interrogating multiple genomes across groups of individuals but will need to integrate with existing EMR systems so phenotype data can be queried together with genomes.

Privacy (computer security). The genome is the ultimate unique identifier. All privacy is lost once the public has access to the genome of an individual, but current regulation, based on the Health Information Portability and Accountability Act, is silent about it.2,3,11 Though the Genetic Information Nondiscrimination Act addresses accountability for the use of genetic information8 privacy laws must change to ensure sensitive information is available only to the appropriate agents. Checking that a given study satisfies a specific privacy definition requires formal reasoning about the data manipulations that generated the disclosed data—impossible without a declarative specification (such as GQL) of such manipulations.

Provenance (software engineering). GQL is an ideal way to record the provenance of genomic study conclusions. Current scripts (such as GATK) often consist of code that is too ad hoc for human readability and span various programming languages too low level for automatic analysis. By contrast, publishing the set of declarative GQL queries along with their results would significantly enhance the clarity and reproducibility of a study’s claims.

While the work is a challenge, making genetics interactive is potentially as transformative as the move from batch processing to time sharing.

Provenance queries also enable scientists to reuse the data of previously published computing-intensive studies. Rather than run their costly queries directly on the original input databases, these scientists would prefer to launch an automatic search for previously published studies in which provenance queries correspond to (parts of) the computation needed by their own queries. The results of provenance queries can be directly imported and used as partial results of a new study’s queries, skipping re-computation. This scenario corresponds in relational database practice to rewriting queries using views.

Scaling (probabilistic inference). Learning the correlation between diseases and variations can be tackled differently if there are a large number of genomes. It may be less critical to accurately evaluate individual variations for such a discovery problem, as erroneous variations are unlikely to occur over a large group of randomly selected individuals. More generally, do other inference techniques leverage the presence of data at scale? As an example, Google leverages the big-data collections it has to find common misspellings. Note that accurately screening individual variations is still needed for personalized medicine.

Crowd sourcing (data mining). Crowdsourcing might be able to address difficult challenges like cancer,14 but the query system must first have mechanisms to allow a group to work coherently on a problem. Imagine that a group of talented high-school science students are looking for genetic associations from cases and controls for a disease. A potentially useful GQL mechanism would be to select a random subset of cases and controls that are nevertheless genetically matched (arising from a single mixing population). Researchers could then query for a random subset of 100 individuals with a fraction of network bandwidth while still providing similar statistical power for detecting associations.

Reducing costs (computer systems). Personalized medicine must be commoditized to be successful so requires computer systems research; for example, since most genomes are read-only, is there some way to leverage solid-state disks? Efficient decomposition between the cloud and a workstation is key to reducing data traffic in and out of the cloud. While genomics has been dominated by costly parallel computers, it makes economic sense to adapt genomic software to harness the parallelism in today’s cheap multicore CPUs.

Conclusion

Genomics is moving from an era of scarcity (a few genomes with imperfect coverage) to abundance (universal sequencing with high coverage and cheap re-sequencing when needed). This shift requires geneticists and computer scientists alike to rethink genomic processing from ad hoc tools that support a few scientists to commodity software that supports a world of medicine. The history of computer systems teaches us that as systems move from scarcity to abundance, modularity is paramount; ad hoc software must be replaced by a set of layers with well-defined interfaces. That these trends have been recognized by industry is seen in the shift from machine-specific formats (such as Illumina) to standards (such as BAM) and from vendor-specific variant formats to VCF. The 1000 Genomes Project (http://www.1000genomes.org/) has gained momentum, with a large number of sequences accessible today. However, much progress has involved defining data formats without powerful interface functionality; using an Internet analogy, it is as if TCP packet formats were defined without the socket interface.

We propose going beyond the layering implicit in current industry standards to enabling personalized medicine and discovery. We advocate separation of evidence from inference and individual variation from variation across groups, as in Figure 3a. We propose a specific interface between the evidence layer and the inference layer via the GQL. While GQL is based on a relational model using a virtual interval relation, further development is required beyond standard relational optimization to allow GQL to scale to large genomes and large populations.

Here, we described several benefits from separating evidence from inference; for example, a genome repository accessible by GQL offers the ability to reuse genomic data across studies, logically assemble case-control cohorts, and quickly change queries without ad hoc programming. GQL also offers the ability to reduce the quality of inference on an individual basis when applying group inference over large populations. We also described simple ideas for scaling GQL to populations using compressed-strength indices and for doing evidence-layer processing in the cloud.

We emphasized that GQL and the evidence layer are only our initial attempt at capturing abstractions for genomics. We hope to prompt a wider conversation between computer scientists and biologists to tease out the right interfaces and layers for medical applications of genomics. Beyond abstractions much work remains to complete the vision, including better large-scale inference, system optimizations to increase efficiency, information retrieval to make medical records computer readable, and security mechanisms. While the work is a challenge, making genetics interactive is potentially as transformative as the move from batch processing to time sharing. Moreover, computer scientists only occasionally get a chance to work on large systems (such as the Internet or Unix) that can change the world.

Acknowledgments

This work was funded in part by the National Institutes of Health-sponsored iDASH project (Grant U54 HL108460), NIH 5R01-HG004962, and a Calit2 Strategic Research Opportunity scholarship for Christos Kozanitis. We thank Rajesh Gupta, Vish Krishnan, Ramesh Rao, and Larry Smarr for their useful discussions and support.

Figures

Figure 1. Universal sequencing, discovery, and personalized medicine.

Figure 1. Universal sequencing, discovery, and personalized medicine.

Figure 2. Evidence for variation in the donor.

Figure 2. Evidence for variation in the donor.

Figure 3. Layers of genomic processing software.

Figure 3. Layers of genomic processing software.

Figure 4. MapJoin and ProjectInterval operations.

Figure 4. MapJoin and ProjectInterval operations.

Figure 5. Prototype implementation of GQL, with visualization using the open-source tool jbrowse; included are discordant paired-end reads supporting deletions.

Figure 5. Prototype implementation of GQL, with visualization using the open-source tool jbrowse; included are discordant paired-end reads supporting deletions.

Join the Discussion (0)

Become a Member or Sign In to Post a Comment