The waters off the west coast of Scotland are lined with once-rich, complex coral reefs. Over the years, bottom-trawling fishermen have all but ruined them, leaving the coral broken and displaced. Divers have begun working to repair the damaged reefs, but in some places they lie more than 650 feet below the surface, far too deep for a standard scuba diver. So, in August, a group of researchers at Heriot-Watt University in Edinburgh announced plans to build a fleet of underwater robots to do the job. These so-called coralbots will be able to work at greater depths than human divers and stay down for longer periods. The coralbots will work cooperatively, identifying dead chunks of the reef via high-resolution cameras, using the lifeless fragments to rebuild the inner structure, then stacking still-living corals on the outside.

Despite the complexity of their task, the robots themselves will not be all that bright, according to Heriot-Watt computer scientist David Corne. “You could rebuild the reefs without particularly complex planning or intelligence in the robots,” he says. Corne points to ants, termites, and wasps as examples. Each of the individual creatures follows a series of simple rules as they react to patterns in their environment, but as a group they can produce fantastic structures. “A termite mound has chambers and a whole ventilation network,” he notes. And yet it is built by a collection of simple intelligences.

Although Corne points to termites and wasps as proof the concept could work, he could just as easily cite the advances in numerous robotics labs across the world. Researchers have been interested in the notion of robots guided by swarm-type intelligence for decades, but in the last few years these collectives have become reality. Scientists have demonstrated more than a hundred robots working together at a time, and within the next year they hope to push the population of a controlled swarm past 1,000. “It’s unreal what we can do as a community compared to just five years ago,” says Magnus Egerstedt, a roboticist at the Georgia Institute of Technology in Atlanta. “There had been lots of simulated swarm robotics, but actually seeing 50 robots reliably doing things together now is not out of the question, and five years ago it was.”

Follow No Leader

Several years ago, while in Budapest, Hungary, for a conference, Egerstedt met a shepherd, and fell into a conversation about the role of the herding dog in directing a flock. Egerstedt himself had long been puzzled by a theoretically related question. “If you’re surrounded by a million robot mosquitoes, and you have a joystick, what do you actually do with the joystick?” he asks. “How should you interface with the swarm?” To him, the interaction of the shepherd with the herding dog seemed like the answer. “It seemed like a really natural way of thinking about human-swarm interactions.”

Back in Atlanta, Egerstedt planned an experiment to test the idea. He recruited test subjects to see how they would fare in directing a group of 25 simple, wheeled robots around the floor of his lab. Each participant was given a joystick and asked to accomplish a number of relatively simple tasks, such as manipulating one of the robots to arrange the others in a circle or a wedge shape. The robots were programmed to react to one another, so the follow-the-leader technique seemed like it would work. Yet the participants failed miserably. “People were overall quite pathetic at it,” Egerstedt recalls.

As a result, Egerstedt moved away from this leader-based interaction to a more democratic approach. “If you’re surrounded by a million mosquitoes, you’re probably not going to pick a key mosquito and start dragging it around,” he acknowledges now. “You’d probably wave around in the air and try to get them to move around in some pattern.”

Egerstedt is now designing a follow-up experiment in which the robots move around within a network of wireless routers. This time, participants will be given a motion-capture wand and asked to accomplish similar tasks with the robots. The difference is that the wand will create flows instead of directing a particular robot. When a user waves the wand in one area of the network, the nearby routers will track that motion, and, as robots pass nearby, the routers will tell them to move the same way. “Then the routers will also talk to each other in a distributed way and figure out how to conduct traffic,” he says.
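The flow idea can be made concrete with a small simulation. What follows is only a rough sketch under stated assumptions, not Egerstedt's software: the routers sit on a hypothetical square grid, each stores one flow vector, and every function name and parameter here (`make_grid`, `wave_wand`, `share_with_neighbors`, `move_robot`, `reach`, `step`) is invented for illustration.

```python
def make_grid(size):
    """Hypothetical router network: one router per grid point, no flow yet."""
    return {(i, j): (0.0, 0.0) for i in range(size) for j in range(size)}


def wave_wand(flows, position, direction, reach=1.5):
    """Routers within `reach` of the wand adopt the direction of the gesture."""
    for (i, j) in flows:
        if (i - position[0]) ** 2 + (j - position[1]) ** 2 <= reach ** 2:
            flows[(i, j)] = direction


def share_with_neighbors(flows):
    """Each router blends its flow with those of its adjacent routers.

    No central coordinator is involved: a router only reads the routers
    directly above, below, left, and right of it.
    """
    blended = {}
    for (i, j), own in flows.items():
        group = [own]
        for di, dj in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            if (i + di, j + dj) in flows:
                group.append(flows[(i + di, j + dj)])
        blended[(i, j)] = (
            sum(g[0] for g in group) / len(group),
            sum(g[1] for g in group) / len(group),
        )
    return blended


def move_robot(robot, flows, step=1.0):
    """A passing robot moves the way its nearest router tells it to."""
    router = min(
        flows, key=lambda r: (r[0] - robot[0]) ** 2 + (r[1] - robot[1]) ** 2
    )
    fx, fy = flows[router]
    return (robot[0] + step * fx, robot[1] + step * fy)
```

In this toy version, the user never addresses a robot directly. A call such as `wave_wand(flows, (2, 2), (1.0, 0.0))` changes only the routers near the wand, repeated calls to `share_with_neighbors` spread that motion outward, and any robot that wanders through the area picks it up from `move_robot`.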

Dispensable Machines

Although Egerstedt is excited about the potential of what he calls swarm conducting, he is also quick to point out the amazing work going on in other labs around the world. One oft-cited example of the possibilities of swarm-controlled machines is the work of roboticist Vijay Kumar and his team at the University of Pennsylvania. Kumar’s group has developed small quadrotors that fly in sync like flocks of birds. The robots vary pitch, roll, and yaw by adjusting the speed of their rotors, and roughly 100 times per second they calculate their position relative to one another and communicate their coordinates via radio.

Currently, the robots rely on a motion capture system in the lab that creates and updates a detailed map of the environment for each quadrotor. This allows the robots to remain small and light, since they do not have to carry bulky onboard sensors to do the mapping work themselves. But Kumar’s team has also demonstrated a larger flying robot outfitted with sensors that autonomously creates these virtual representations of the surrounding space. He says he can envision these robots exploring areas off-limits to humans. “Our interests are in search and rescue,” he says. “I envision a day when robots are the first ones on the scene.”

The robots will be able to function in dangerous environments in part because they are designed to be expendable. “The role of individual robots will be minimized,” Kumar explains. “The idea of one superior unit to control everybody is not necessary.” In fact, he says, you have to assume that a percentage of the robots will be lost in dangerous environments. The algorithms that control them need to be built with this in mind. “You need to make sure that the algorithms will work independent of the number of units.”
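One way to picture an algorithm that works "independent of the number of units" is a rule in which every robot does the same thing and no robot is special. The sketch below is an assumption-laden illustration, not Kumar's controller: it uses a simple neighbor-averaging (rendezvous) rule, and the names `consensus_step`, `spread`, and `run`, along with the `radius`, `gain`, and `num_lost` parameters, are hypothetical. The sensing radius is deliberately set wide enough that the group stays connected.

```python
import random


def consensus_step(positions, radius=2.0, gain=0.5):
    """Move every robot part of the way toward the mean of its neighbors."""
    updated = []
    for x, y in positions:
        # Neighbors are all robots within `radius`, including the robot itself.
        near = [
            (px, py)
            for px, py in positions
            if (px - x) ** 2 + (py - y) ** 2 <= radius ** 2
        ]
        mean_x = sum(p[0] for p in near) / len(near)
        mean_y = sum(p[1] for p in near) / len(near)
        updated.append((x + gain * (mean_x - x), y + gain * (mean_y - y)))
    return updated


def spread(positions):
    """Largest extent of the swarm along either axis."""
    xs = [p[0] for p in positions]
    ys = [p[1] for p in positions]
    return max(max(xs) - min(xs), max(ys) - min(ys))


def run(num_robots, num_lost, steps=50, seed=0):
    """Scatter robots in a unit square, lose some mid-run, keep going."""
    rng = random.Random(seed)
    swarm = [(rng.uniform(0, 1), rng.uniform(0, 1)) for _ in range(num_robots)]
    for step in range(steps):
        if step == 10:
            # Simulate robots destroyed in a hazardous environment.
            swarm = rng.sample(swarm, num_robots - num_lost)
        swarm = consensus_step(swarm)
    return swarm
```

Nothing in `consensus_step` refers to the size of the swarm or to any particular member, so the same code runs unchanged with 20 robots or 200, and the survivors still gather after a portion of the group disappears.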

Expanding the Swarm

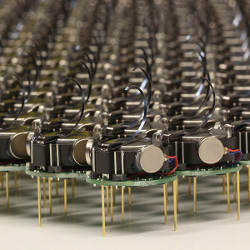

The need to push those algorithms is part of what drove Harvard University roboticist Mike Rubenstein to construct the Kilobot, an inexpensive robot no wider than a quarter that moves around on three simple, vibrating stick legs. Rubenstein’s work is linked to Harvard’s Robobees project—an NSF-funded multiyear effort to build a swarm of autonomous robotic bees that may eventually be able to pollinate areas like living bees do.


The Kilobots offer a way to test the same algorithms that will guide the bees, but in a simpler, cheaper, grounded machine. If the goal is to develop control algorithms that can handle true swarms, Rubenstein reasoned, then those algorithms need to be tested at scale. Software that effectively guides the actions of a few dozen robots could break down when dealing with a few thousand. So Rubenstein designed the cheapest mobile robot he could, a squat, cylindrical machine with an infrared sensor and transmitter and, in all, less than $15 in parts. For the last few months he has been assembling his swarm with a goal of reaching 1,000 early this year.

In the meantime, Rubenstein and his collaborators have also demonstrated many insect-like behaviors, such as navigation, foraging, and collective transport, on swarms of up to 100 robots. Like Kumar, he views the individual as dispensable. “Everything you need to do can be done on the group level, not on the individual robot level,” he says. “You give them a program and they run it. User interaction is not necessary.” Still, if a human observer wanted to switch the swarm’s task midstream, it would be possible to communicate that change via a central controller. This central controller would not actively mediate the actions of the robots. It would merely set them to work, or pause their actions and send them new directives when necessary.

The insights gleaned from developing control systems for robots could also be transferred to other fields, according to Alcherio Martinoli, a roboticist at the Swiss Federal Institute of Technology in Lausanne. “You can use them as a testbed for fine-tuning certain methods and then you can transport the methods,” he says. For example, Martinoli and his colleagues are exploring how their algorithms might assist a flight traffic control system should our future skies become overcrowded with piloted and unmanned planes.

Still, it is the direct applications, and the tiny machines themselves, that draw so much attention to the field of swarm robotics. Martinoli has explored using swarms to inspect complex industrial equipment such as jet turbines. Kumar’s robots could scour dangerous disaster zones or crumpled buildings for survivors. Harvard’s Justin Werfel, a colleague of Rubenstein, is leading a project, TERMES, that aims to create a fleet of cooperative construction robots. Werfel envisions these machines building bases in extreme locales such as the deep sea or the Moon. With each application, numerous technological hurdles remain, but experts are quick to note that the basic idea of a swarm of relatively unintelligent, independent agents working together to achieve a complex goal is not a stretch at all. “We know it can be done because we see it happening in nature,” Werfel says.

Further Reading

Bonabeau, E. Swarm Intelligence: From Natural to Artificial Systems, Oxford Univ., 1999.

Kushleyev, A., Mellinger, D. and Kumar, V. Towards a swarm of nanoquadrotors; http://www.youtube.com/watch?v=YQIMGV5vtd4

Martínez, S., Cortés, J. and Bullo, F. Motion coordination with distributed information, IEEE Control Systems Magazine, Aug. 2007.

Olfati-Saber, R., Fax, J.A. and Murray, R.M. Consensus and cooperation in networked multi-agent systems, Proceedings of the IEEE, Jan. 2007.

Rubenstein, M., Ahler, C. and Nagpal, R. Kilobot: A low cost scalable robot system for collective behaviors, IEEE Intl. Conf. on Robotics and Automation, 2012.
