Over the last few years, data-intensive machine-learning techniques have made dramatic strides in speech recognition and image analysis. Now these methods are making significant advances on another long-standing challenge: translation of written text between languages.

Until a couple of years ago, the steady progress in machine translation had always been dominated by Google, with its well-supported phrase-based statistical analysis, said Kyunghyun Cho, an assistant professor of computer science and data science at New York University (NYU).

However, in 2015, Cho (then a post-doc in Yoshua Bengio’s group at the University of Montreal) and others brought neural-network-based statistical approaches to the annual Workshop on Machine Translation (WMT 15), and for the first time, he said, “Google translation was not doing better than any of those academic systems.”

Since then, “Google has been really quick in adapting this (neural network) technology” for translation, Cho observed. Based on its success, last fall Google began replacing the phrase-based system it had used for years, starting with some popular language pairs. The new system is “amazingly good, and it’s all thanks to neural machine translation,” Cho said.

Data posted by Google in November 2016 show that its new system is now essentially as good as human translators, at least for translation between European languages. (Google declined to be interviewed for this story.)

Neural Networks

The architectures that enable these advances, as well as the methods for configuring them, are fundamentally similar to those developed in the late 1980s, says Yann LeCun, director of AI research at Facebook and a professor at NYU. These systems employ multiple layers of simple elements that are extensively interconnected like those in the brain, inspiring the name “neural networks.” Individual elements typically combine the outputs of many other elements with variable weights, and then use a nonlinear threshold function to determine their own output.
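As a minimal sketch of the element described above, the following Python snippet combines weighted inputs and passes the sum through a nonlinear function. The names `neuron` and `layer`, the choice of a sigmoid as the nonlinearity, and all numeric values are illustrative assumptions, not details taken from any system discussed here.

```python
import math


def neuron(inputs, weights, bias):
    """Combine inputs with variable weights, then apply a nonlinearity."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    # The sigmoid is a smooth, threshold-like function with output in (0, 1).
    return 1.0 / (1.0 + math.exp(-total))


def layer(inputs, weight_rows, biases):
    """A layer is a set of neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Two stacked layers: the outputs of the first feed the second.
hidden = layer([0.5, -1.0], [[1.0, 2.0], [-1.0, 0.5]], [0.0, 0.1])
output = neuron(hidden, [1.5, -2.0], 0.2)
print(hidden, output)
```

Stacking more calls to `layer` gives the "many layers of processing" that deeper networks rely on.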

“To solve a complicated problem, especially if we start with very low-level sensory information, we need to have a very high level of abstraction,” said Cho. “To get that kind of higher-level abstraction, it turns out we need to have many layers of processing.”

In analyzing an image, for example, initial layers might compare nearby pixels to identify lines or other primitive features, while deeper layers flag progressively more complex combinations of features, until the final object is classified as, say, a cat or a tank.

The specific analysis a particular network performs depends on the numerical weights assigned to each interconnection and on the details of how their combined output is computed. The challenge is to set the parameters for a particular task by exposing the system to a large series of inputs whose desired outputs are known, and sequentially adjusting the parameters to reduce any discrepancies.

After such “training” with known examples, the network can quickly extract high-level information from a novel low-level representation. With a large enough set of examples, this training can be done without even specifying which features are needed for classification.
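The training procedure described in this section can be sketched on a toy problem. The example below is an assumption-laden illustration: it trains a single sigmoid unit (not a deep network) on the logical OR function, and the names `predict` and `train`, the learning rate, and the number of passes are all hypothetical choices. The update rule is plain gradient descent on a cross-entropy loss.

```python
import math


def predict(weights, bias, inputs):
    """Output of one sigmoid unit for the given inputs."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-total))


def train(examples, passes=2000, rate=0.5):
    """Sequentially adjust the parameters to shrink output discrepancies."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(passes):
        for inputs, target in examples:
            # Discrepancy between the actual and the desired output.
            error = predict(weights, bias, inputs) - target
            # Move each parameter a small step against the error gradient.
            weights = [w - rate * error * x for w, x in zip(weights, inputs)]
            bias -= rate * error
    return weights, bias


# Inputs whose desired outputs are known (toy data: logical OR).
OR_EXAMPLES = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]
weights, bias = train(OR_EXAMPLES)
for inputs, target in OR_EXAMPLES:
    print(inputs, target, round(predict(weights, bias, inputs), 3))
```

Nothing in the loop states what rule to learn. The parameters settle into values that reproduce the known outputs, which is the sense in which features need not be specified by hand.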

Deep Learning

Neural networks are often described as “deep learning.” The phrase encompasses systems that perform more complex functions than traditional “neurons,” but it also sidesteps the somewhat-checkered reputation of neural networks.

Several ingredients help explain neural networks’ recent renaissance. First, hardware is vastly superior to that available 30 years ago, including commercial graphics processing units (GPUs) for rapid calculations. “That really brought a qualitative difference in what we can do,” LeCun said. Google has developed hardware it calls tensor processing units, which it says are needed for the rapid results that Web users expect.

A second enabler for deep learning is that researchers can now access enormous amounts of data. Such large data sets, gleaned from our increasingly digital lives, are critical for nailing down the many parameters of deep neural networks.

In addition, although “the basic principles were around 30 years ago, there are a few details in the way we do things now that are different from back then that allow us to train very large, very deep networks,” LeCun said. These algorithmic improvements include better nonlinear functions and methods for regularizing and normalizing input data to help the networks identify the data’s salient features.

Assessing Quality

An ongoing challenge is measuring the quality of a translation or a similar task. “As the problems that we solve get more and more advanced,” Cho said, “how can we even tell that a model is doing well?”

Even when automated systems are excellent on average, they occasionally can make mistakes that humans find shocking. “We call them stupid errors,” said Salim Roukos, an IBM Fellow at IBM’s Thomas J. Watson Research Center in New York. Fifteen years ago, he and his colleagues developed a computer-based translation metric called bilingual evaluation understudy, or BLEU, which is still widely used and helpful for quality control and tool improvement.

However, BLEU is not sensitive enough to individual misfires, Roukos said. “When we invented BLEU, we thought that in three or five years we would have a better metric, because it has the significant shortcoming which is it’s not very good at the level of a sentence.” Researchers therefore still turn to human evaluators of translation for the ultimate validation, although even humans have weaknesses, such as favoring translations that sound natural even if they are inaccurate.
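To make the sentence-level weakness concrete, here is a simplified, single-reference sketch of a BLEU-style score: clipped n-gram precisions for n = 1 to 4, combined by a geometric mean and multiplied by a brevity penalty. The function name `simple_bleu` is hypothetical, and the sketch omits parts of the full metric, such as multiple references and aggregation of counts over a whole corpus.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count every run of n consecutive tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def simple_bleu(candidate, reference, max_n=4):
    """Simplified sentence-level BLEU against a single reference."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts, ref_counts = ngrams(cand, n), ngrams(ref, n)
        # Clip each n-gram's credit at its count in the reference.
        matched = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        total = sum(cand_counts.values())
        if matched == 0 or total == 0:
            return 0.0  # one empty precision zeroes the geometric mean
        log_precisions.append(math.log(matched / total))
    # Penalize candidates that are shorter than the reference.
    if len(cand) > len(ref):
        brevity = 1.0
    else:
        brevity = math.exp(1.0 - len(ref) / len(cand))
    return brevity * math.exp(sum(log_precisions) / max_n)


reference = "the cat sat on the mat"
print(simple_bleu("the cat sat on the mat", reference))  # identical sentence
print(simple_bleu("the cat is on the mat", reference))   # one word changed
```

In this sketch, changing a single word in a six-word sentence removes every matching four-word sequence, so the score drops from 1.0 to 0.0. Averaged over many sentences such effects wash out, but on an individual sentence the score says little about how serious the error is.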

Turning to Translation

In the past few years, such deep-learning techniques have produced “step-function” improvements for image analysis and for speech recognition, said Roukos. The improvement for translation, while significant, “has not been as great” so far, he said. “The jury is still out.”

Most implementations of translation employ two neural networks. The first, called the encoder, processes input text from one language to create a fixed-length vector representation that is updated as each piece of the input is read. A second “decoder” network monitors this vector to produce text in a different language. Typically, the encoder and decoder are trained as a pair for each choice of source and target language.
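The structure of such an encoder and decoder can be sketched in a few lines. This is only a structural illustration under stated assumptions: the weights are random and untrained, so the output word is meaningless, and the vocabularies, the state size, and the names `encode` and `decode_step` are all hypothetical.

```python
import math
import random

random.seed(0)
STATE_SIZE = 4  # length of the fixed-length vector (assumed)
SOURCE_VOCAB = ["le", "chat", "dort"]
TARGET_VOCAB = ["the", "cat", "sleeps", "<end>"]


def random_matrix(rows, cols):
    return [[random.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


# Untrained stand-ins for parameters that training would normally set.
EMBED = {w: [random.uniform(-1, 1) for _ in range(STATE_SIZE)] for w in SOURCE_VOCAB}
W_ENC = random_matrix(STATE_SIZE, STATE_SIZE)
W_OUT = random_matrix(len(TARGET_VOCAB), STATE_SIZE)


def encode(words):
    """Fold the words, one at a time, into a single fixed-length vector."""
    state = [0.0] * STATE_SIZE
    for word in words:
        mixed = matvec(W_ENC, state)
        state = [math.tanh(m + e) for m, e in zip(mixed, EMBED[word])]
    return state


def decode_step(state):
    """Score every target word from the vector and pick the best one."""
    scores = matvec(W_OUT, state)
    return TARGET_VOCAB[scores.index(max(scores))]


short = encode(["le", "chat"])
longer = encode(["le", "chat", "dort"])
print(len(short), len(longer), decode_step(longer))
```

Whatever the length of the input sentence, the encoder hands the decoder a vector of the same size, which is what allows the two networks to be treated as separate components.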

An additional critical element is the use of “attention,” which Cho said was “motivated from human translation.” As translation proceeds, based on what has been translated so far, this attention mechanism selects the most useful part of the text to translate next.

Attention models “really made a big difference,” said LeCun. “That’s what everybody is using right now.”
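One common way to realize the attention idea is to score each position of the input against the decoder's current state and take a weighted average. The sketch below assumes a simple dot-product score and a softmax. Attention models in the literature use various scoring functions, and the name `attend` and the example vectors are hypothetical.

```python
import math


def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(decoder_state, encoder_states):
    """Weight each input position by its relevance to the decoder state."""
    scores = [
        sum(d * e for d, e in zip(decoder_state, state))
        for state in encoder_states
    ]
    weights = softmax(scores)
    size = len(decoder_state)
    # The context vector is the weighted average of the encoder states.
    context = [
        sum(w * state[i] for w, state in zip(weights, encoder_states))
        for i in range(size)
    ]
    return weights, context


# One vector per input word (illustrative values only).
encoder_states = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
weights, context = attend([2.0, 0.0], encoder_states)
print(weights, context)
```

Here the first input position receives the largest weight because it is most similar to the decoder state. As the decoder state changes from one output word to the next, the weights shift to other parts of the input.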

A Universal Language?

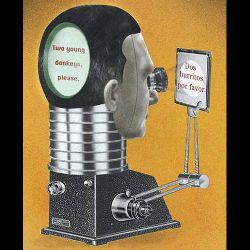

The separation of the encoder for one language from the decoder for another language raises an intriguing question about the vector that passes information between the two. As Google put it in a November 2016 blog post, “Is the system learning a common representation in which sentences with the same meaning are represented in similar ways regardless of language—i.e., an ‘interlingua’?” This possibility is reminiscent of the universal language envisioned by 17th-century polymath Gottfried Leibniz for formally denoting philosophical, mathematical, and scientific concepts.

“I would be careful about interpreting things in this sense,” cautioned Yoav Goldberg, a senior lecturer in computer science at Bar Ilan University in Israel. “There definitely is some representation of the sentence that can be used for translating it into different languages,” he said, but “it is a very opaque representation which we cannot understand at all.”

Goldberg suspects the representation is only capturing words and short phrases, “so there is some kind of a shared structure, but I think it’s still kind of a shallow mapping between these languages.”

Cho even imagines a more general “plug-and-play” capability in the future, for example with an encoder for images driving a decoder for language to automatically create captions in any language. “We are a long way from that,” he admitted.

Beyond Europe

Even at a practical level, the separation of encoder and decoder may help solve the problem of inadequate training data for many language pairs. The more than 100 languages supported by Google Translate would require data sets for roughly 5,000 language pairs, or about 10,000 if each translation direction is counted separately. Training encoders and decoders separately would vastly ease the burden. Google reported “reasonable” translations for two languages that had never been used as a pair for training.
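As a rough check on the scale of the problem, the arithmetic can be worked through directly. The sketch assumes exactly 100 languages, and it assumes that "separate" training means one encoder and one decoder per language. The function names are hypothetical.

```python
def undirected_pairs(languages):
    """Language pairs, ignoring translation direction."""
    return languages * (languages - 1) // 2


def directed_pairs(languages):
    """Pairs counted once per translation direction."""
    return languages * (languages - 1)


def separate_components(languages):
    """One encoder plus one decoder per language (an assumption)."""
    return 2 * languages


n = 100
print(undirected_pairs(n))     # 4950
print(directed_pairs(n))       # 9900
print(separate_components(n))  # 200
```

The number of paired systems grows with the square of the number of languages, while the number of separately trained components grows only linearly.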

Nonetheless, translations are definitely more difficult between languages from different families. Arabic, for example, relies heavily on word endings to convey meaning, so “the concept of a word” is not the same as in English, Roukos said. Goldberg noted the challenge arising from free word order in Hebrew. In these cases, preprocessing of the text, for example dividing Arabic words into multiple segments to facilitate mapping between languages, can significantly improve translation.

In written Chinese, noted Victor Mair, a professor of Chinese language and literature at the University of Pennsylvania, there are no spaces separating words. In addition, he said that Chinese writing frequently includes words from Cantonese and other variants, as well as “classicisms” that may contain archaic vocabulary and grammar. “It confuses the computer no end,” Mair said, but he still finds the translation tools useful.

Google’s new system is “really pretty good” between Chinese and English, but Mair suspects that “there are some things they will never get right.”

Revolutions in Progress

In view of recent progress, however, “never” may not be so far away. Newer systems do not require spaces between words, Cho said, and “neural machine translation is making it easier for us to build a translation system” that makes use of the internal structure of complex Chinese characters, or the components of a compound German word.

A remaining question is how good neural systems can get at translation without exploiting traditional expert knowledge. “Knowing something about language in general, or properties of linguistic structure, definitely does help in the translation,” Goldberg said. “We shouldn’t be oblivious to the concepts of linguistics or expect them to be learned on their own.”

But the powerful new systems are continually challenging the importance of expertise.

Based on his experience with neural networks going back to Bell Labs in the 1980s, LeCun cited a fourth reason for the upswing in deep learning, besides fast computers, big data, and new ideas: “People started believing.” The reputation of neural nets as finicky, he said, began to break down with widespread sharing of code and methods, and a steady stream of solid results.

“First in speech recognition, then in image processing, and now in natural-language understanding and translation in particular, it really is a revolution,” LeCun said. “It’s an incredibly short time” for going from blue-sky research to industry standard. “All the large companies that have big language translation services are basically using neural nets.”

Byrne, M. This DARPA Video Targeting AI Hype Is Necessary Viewing, Motherboard, https://motherboard.vice.com/en_us/article/this-darpa-video-targeting-ai-hype-is-necessary-viewing

A Neural Network for Machine Translation, at Production Scale, Google Research Blog, https://research.Googleblog.com/2016/09/a-neural-network-for-machine.html

Zero-Shot Translation with Google’s Multilingual Neural Machine Translation System, Google Research Blog, http://bit.ly/2nc469z
