Machine vision coupled with artificial intelligence (AI) has made great strides toward letting computers understand images. Thanks to deep learning, which processes information in a way loosely analogous to the human brain, machine vision is doing everything from keeping self-driving cars on the right track to improving cancer diagnosis by examining biopsy slides or X-ray images. Now some researchers are going beyond what the human eye or a camera lens can see, using machine learning to watch what people are doing on the other side of a wall.

The technique relies on low-power radio frequency (RF) signals, which reflect off living tissue and metal but pass easily through wooden or plaster interior walls. AI can decipher those signals, not only to detect the presence of people, but also to see how they are moving, and even to predict the activity they are engaged in, from talking on a phone to brushing their teeth. With RF signals, “they can see in the dark. They can see through walls or furniture,” says Tianhong Li, a Ph.D. student in the Computer Science and Artificial Intelligence Laboratory at the Massachusetts Institute of Technology (MIT). He and fellow graduate student Lijie Fan helped develop a system to measure movement and, from that, to identify specific actions. “Our goal is to understand what people are doing,” Li says.

Such an understanding could come in handy for, say, monitoring elderly residents of assisted living facilities to see if they are having difficulty performing the tasks of daily living, or to detect if they have fallen. It could also be used to create “smart environments,” in which automated devices turn on lights or heat in a home or an office. A police force might use such a system to monitor the activity of a suspected terrorist or an armed robber.

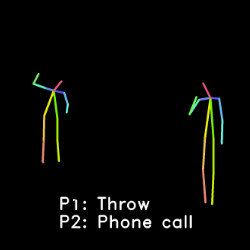

Figure. RF-Pose uses wireless signals to monitor people’s movements on the other side of a wall.

In the home, one advantage of monitoring activity through walls with RF signals is that the signals cannot resolve faces or show what a person is wearing, so they may offer more privacy than studding the home with cameras, says Dina Katabi, the MIT professor leading the research. Another is that people do not need to wear monitoring devices they might forget or find uncomfortable; they simply move through their homes as they normally would.

The MIT system uses a radio transmitter operating between 5.4 GHz and 7.2 GHz, at power levels 1,000 times lower than those of a Wi-Fi signal, so it should not cause interference. The RF transmissions bounce strongly off people because of the high water content of the human body, but they also bounce off other objects in the environment to varying degrees, depending on what each object is made of. “You get this mass of reflections, signals bouncing off everything,” Katabi says.

So the first step is to teach the computer to identify which signals are coming from people. The team does this by recording each scene with both a camera and the RF system, and using the video images to label the people in the training data for a convolutional neural network (CNN), a type of deep learning model that learns how much weight to give different features of an image. The signals also carry spatial information, because a reflection from a more distant person takes longer to return. The CNN can capture that information and use it to separate two or more people in the same vicinity, although it can make errors when the people are very close together or hugging, Katabi says.
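
The time-of-flight idea can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes the transmitter sweeps the whole 5.4 to 7.2 GHz band, as a frequency-modulated continuous-wave (FMCW) radar does, in which case the range resolution is c/(2B) for a sweep bandwidth B. The function names are hypothetical.

```python
# Speed of light in vacuum, in metres per second.
C = 299_792_458.0


def round_trip_distance(delay_s: float) -> float:
    """Distance to a reflector, given the round-trip delay of its echo.

    The signal travels out and back, so the one-way distance is c * t / 2.
    """
    return C * delay_s / 2.0


def range_resolution(bandwidth_hz: float) -> float:
    """Smallest range separation a radar sweeping this bandwidth can resolve.

    Assumes an FMCW-style sweep, where the resolution is c / (2 * B).
    """
    return C / (2.0 * bandwidth_hz)


if __name__ == "__main__":
    # Band quoted in the article: 5.4 GHz to 7.2 GHz, i.e. 1.8 GHz wide.
    bandwidth = 7.2e9 - 5.4e9
    print(f"range resolution: {range_resolution(bandwidth) * 100:.1f} cm")

    # A person standing 3 m away returns an echo after 2 * 3 / c seconds.
    delay = 2 * 3.0 / C
    print(f"delay: {delay * 1e9:.1f} ns -> distance: {round_trip_distance(delay):.2f} m")
```

Under these assumptions the resolution comes out at roughly 8 cm, so two people whose distances from the radar differ by less than that would be hard to separate by range alone, which hints at why people who are hugging cause trouble.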

Down to the Bones

The researchers also want to identify the actions people are taking based on how they move. To do that, the system takes an intermediate step: it renders the RF reflections from each person as a skeleton, a simplified version of the human body reduced essentially to a stick figure consisting of a head and limbs. Based on the relative positions of the lines in the stick figure, the computer can identify various actions, such as sitting down, bending to pick something up, waving, drinking, and talking on the phone.
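
The step from stick figure to action can be illustrated with a deliberately simple classifier. This is not the MIT team's model, which is a neural network trained on their data; it is a hypothetical nearest-template sketch that normalizes each skeleton for position and body size and then picks the closest labeled pose. The joint list, the template poses, and the function names are all invented for illustration.

```python
import numpy as np

# Hypothetical joint order for a 2D stick figure (y points up, units in metres).
JOINTS = ["head", "neck", "l_hand", "r_hand", "hip", "l_foot", "r_foot"]
NECK, R_HAND, HIP = JOINTS.index("neck"), JOINTS.index("r_hand"), JOINTS.index("hip")


def normalize(skeleton) -> np.ndarray:
    """Make a pose independent of where the person stands and how tall they are.

    Joints are shifted so the hip sits at the origin, then divided by torso length.
    """
    skeleton = np.asarray(skeleton, dtype=float)
    torso = np.linalg.norm(skeleton[NECK] - skeleton[HIP])
    return (skeleton - skeleton[HIP]) / torso


def classify(skeleton, templates: dict) -> str:
    """Return the label of the template pose nearest to the given skeleton."""
    pose = normalize(skeleton)
    return min(templates, key=lambda label: np.linalg.norm(pose - normalize(templates[label])))


# Invented template poses: only the right hand differs between them.
STANDING = np.array([[0, 1.7], [0, 1.5], [-0.3, 0.9], [0.3, 0.9],
                     [0, 1.0], [-0.15, 0.0], [0.15, 0.0]])
WAVING = STANDING.copy()
WAVING[R_HAND] = [0.4, 1.9]     # hand raised above the head
PHONE = STANDING.copy()
PHONE[R_HAND] = [0.1, 1.65]     # hand held beside the head
TEMPLATES = {"standing": STANDING, "waving": WAVING, "talking on phone": PHONE}

if __name__ == "__main__":
    # A taller person, 2 m to the right, holding a hand near their ear.
    query = PHONE * 1.1 + np.array([2.0, 0.0])
    query[R_HAND] += [0.02, -0.05]
    print(classify(query, TEMPLATES))
```

Centring on the hip and scaling by torso length is what lets the comparison rest on the relative positions of the limbs rather than on absolute coordinates.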

The team built on previous work that used AI to recognize actions in video. None of the existing action-detection datasets, however, included RF information, so the team had to create its own. They recruited 30 volunteers, put them in 10 different environments (offices, hallways, and classrooms, for example), and asked them to perform actions chosen at random from a list of 35. That produced 25 hours of data to train and test their model.

To provide more data, they also derived skeletons from video that had no associated RF signals. Li says those skeletons differed slightly from the ones derived from RF signals, so the team had to make some adjustments. This approach, however, allowed the researchers to draw on existing video datasets such as PKU-MMD, which contains nearly 20,000 actions from 51 categories performed by 66 people.

Movement in Space

Katabi is not the only researcher using RF to see through walls. Neal Patwari, a professor of computer science and engineering at Washington University in St. Louis, MO, has been working on RF sensing for years, although his early work, back in 2009, did not involve machine learning. His basic system uses a radio transmitter and a receiver, and bodies in the vicinity alter the strength of the signal the receiver picks up. A person crossing the line between the two blocks the signal strongly, someone nearby blocks it somewhat less, and even a person farther away changes the received signal slightly because the signal scatters off their body.
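
Patwari's description of a single transmitter-receiver link suggests a simple way to read received signal strength (RSS). The sketch below is hypothetical: the empty-room calibration step, the 6 dB and 2 dB thresholds, and the three labels are assumptions chosen for illustration, not values from his work.

```python
from statistics import mean


def calibrate(empty_room_rss_dbm):
    """Baseline RSS of the link, averaged while nobody is near it."""
    return mean(empty_room_rss_dbm)


def classify_link(rss_dbm, baseline_dbm, block_db=6.0, near_db=2.0):
    """Label each RSS sample by how much a body appears to disturb the link.

    A person on the line between transmitter and receiver attenuates the
    signal strongly; a person nearby changes it less; scattering from someone
    far away can push the RSS slightly up or down.
    """
    labels = []
    for rss in rss_dbm:
        change = baseline_dbm - rss          # positive means attenuation
        if change >= block_db:
            labels.append("crossing the link")
        elif abs(change) >= near_db:
            labels.append("near the link")
        else:
            labels.append("far away or absent")
    return labels


if __name__ == "__main__":
    baseline = calibrate([-50.2, -49.8, -50.0])    # about -50 dBm
    trace = [-50.1, -52.5, -58.0, -51.0, -49.0]    # a person walks through
    for rss, label in zip(trace, classify_link(trace, baseline)):
        print(f"{rss:6.1f} dBm  {label}")
```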

Patwari uses machine learning to teach the computer which particular blend of signals goes with a specific action. For instance, a particular set of measurements from the kitchen might mean someone is cooking. “You could have an algorithm that learned what the features are that correspond to cooking. And then I could know, for somebody living home alone, that they’re cooking at this time on this day and make sure that they’re not veering from their regular schedule too much,” he says. Such a system could be useful for monitoring a person in the early stages of dementia or someone known to be at risk of depression.
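
One way to learn which features correspond to cooking is to summarize a window of RSS readings from several links and compare it with per-activity averages. The nearest-centroid classifier below is a hypothetical stand-in chosen for brevity, not Patwari's method; the feature (per-link standard deviation), the three links, and the synthetic training data are all assumptions.

```python
import numpy as np


def window_features(rss_window: np.ndarray) -> np.ndarray:
    """Summarize a (samples x links) RSS window as per-link standard deviation:
    a moving body makes the links it affects fluctuate."""
    return np.std(rss_window, axis=0)


def fit_centroids(windows, labels) -> dict:
    """Average the feature vectors of all training windows with each label."""
    feats = np.array([window_features(w) for w in windows])
    labels = np.asarray(labels)
    return {str(lab): feats[labels == lab].mean(axis=0) for lab in np.unique(labels)}


def predict(rss_window: np.ndarray, centroids: dict) -> str:
    """Return the activity whose centroid is closest to this window's features."""
    f = window_features(rss_window)
    return min(centroids, key=lambda lab: np.linalg.norm(f - centroids[lab]))


# Synthetic data for three hypothetical links: kitchen, hallway, bedroom.
rng = np.random.default_rng(0)
SPREAD = {"cooking": [3.0, 0.3, 0.3], "walking": [0.3, 3.0, 0.3], "idle": [0.3, 0.3, 0.3]}


def synthetic_window(activity: str, samples: int = 200) -> np.ndarray:
    """RSS around -50 dBm with activity-dependent fluctuation on each link."""
    return -50.0 + rng.normal(0.0, SPREAD[activity], size=(samples, 3))


if __name__ == "__main__":
    train = [(synthetic_window(a), a) for a in SPREAD for _ in range(5)]
    centroids = fit_centroids([w for w, _ in train], [a for _, a in train])
    print(predict(synthetic_window("cooking"), centroids))
```

A model like this also shows the weakness described next: if the environment shifts the per-link statistics, the stored centroids no longer match and would need retraining.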

One issue, though, is that the measurements can shift as the environment changes, Patwari says. For instance, opening a refrigerator full of food right after a trip to the grocery store might generate one set of RF signals; a few days later, with more empty space in the refrigerator, the signals could look different. The training data goes stale after a while.

Patwari is currently developing a system that uses RF signals to monitor a patient’s breathing through walls, for instance in a psychiatric hospital. Many psychiatric hospitals require staff to enter a patient’s room every 15 minutes at night to make sure the patient is sleeping normally and not trying to self-harm. Such checks, of course, can wake patients and deprive them of the sleep they need. An RF system can detect the movement of a patient’s chest and confirm that they are sleeping normally. Because it sees through walls, all the equipment and wires stay outside the room, so patients cannot damage them or use them to harm themselves.
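
A common way to turn chest motion into a breathing rate is to look for the dominant low-frequency component of the reflected signal. The sketch below assumes the radar already provides the phase of the reflection from the chest; a chest displacement d changes the round-trip path by 2d and so shifts that phase by 4πd/λ. The 6 GHz carrier, 20 Hz sampling rate, 0.1 to 0.7 Hz search band, and synthetic signal are assumptions for illustration, not details of Patwari's system.

```python
import numpy as np

C = 299_792_458.0           # speed of light, m/s
WAVELENGTH = C / 6.0e9      # assumed 6 GHz carrier, about 5 cm


def phase_to_displacement(phase_rad):
    """Chest displacement from reflection phase: the round trip doubles the
    path change, so phase = 4 * pi * d / wavelength."""
    return phase_rad * WAVELENGTH / (4 * np.pi)


def breathing_rate_bpm(displacement: np.ndarray, fs: float, band=(0.1, 0.7)) -> float:
    """Estimate breaths per minute as the strongest spectral peak in `band` (Hz)."""
    x = displacement - np.mean(displacement)        # drop the static offset
    spectrum = np.abs(np.fft.rfft(x))
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    peak_hz = freqs[in_band][np.argmax(spectrum[in_band])]
    return 60.0 * peak_hz


if __name__ == "__main__":
    fs = 20.0                                       # samples per second
    t = np.arange(1200) / fs                        # one minute of data
    rng = np.random.default_rng(1)
    chest = 0.004 * np.sin(2 * np.pi * 0.25 * t)    # 4 mm motion, 15 breaths/min
    phase = 4 * np.pi * chest / WAVELENGTH + rng.normal(0.0, 0.05, t.size)
    d = phase_to_displacement(phase)
    print(f"peak chest displacement ~ {np.max(np.abs(d)) * 1000:.1f} mm")
    print(f"breathing rate ~ {breathing_rate_bpm(d, fs):.1f} breaths/min")
```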

The Doppler Effect

Respiration can be detected by using RF signals as a sort of Doppler radar, measuring how the signal’s frequency shifts as it reflects off something moving back and forth, says Kevin Chetty, a professor in the department of security and crime science at University College London. His focus is on using machine learning to make sense of what he calls passive Wi-Fi, which takes advantage of RF signals already present in the environment, potentially including the signals used by 5G cellphones. Any sort of movement in an RF field produces Doppler shifts. “There’s a complex amalgamation of forward and back, left and right, different angles to different Doppler components,” Chetty says. “You get micro-Doppler signatures associated with different types of motion.”
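
For a monostatic radar (transmitter and receiver in the same place), a target moving toward the radar at radial speed v shifts a carrier of frequency f by 2vf/c. Passive systems are often bistatic, which changes the geometry factor, so the sketch below is a simplification. It simulates a reflector swinging back and forth like an arm, computes a short-time Fourier transform with NumPy, and tracks the strongest Doppler frequency in each frame; the oscillating trace is a simple micro-Doppler signature. The 5.8 GHz carrier, the 1.4 m/s walking speed, the swing amplitude, the window sizes, and the function names are assumptions.

```python
import numpy as np

C = 299_792_458.0   # speed of light, m/s
F_C = 5.8e9         # assumed Wi-Fi carrier, Hz
WAVELENGTH = C / F_C


def doppler_shift(radial_speed: float, carrier_hz: float = F_C) -> float:
    """Monostatic Doppler shift in Hz; positive for an approaching target."""
    return 2.0 * radial_speed * carrier_hz / C


def simulate_return(fs: float, duration: float, amp_m: float, rate_hz: float):
    """Complex baseband echo from a reflector whose range oscillates sinusoidally."""
    t = np.arange(int(fs * duration)) / fs
    r = 3.0 + amp_m * np.sin(2 * np.pi * rate_hz * t)     # range in metres
    return np.exp(-1j * 4 * np.pi * r / WAVELENGTH)       # round-trip phase


def doppler_track(signal: np.ndarray, fs: float, win: int = 128, hop: int = 32):
    """Strongest Doppler frequency (Hz) in each short-time FFT frame."""
    window = np.hanning(win)
    freqs = np.fft.fftshift(np.fft.fftfreq(win, d=1.0 / fs))
    peaks = []
    for start in range(0, len(signal) - win + 1, hop):
        frame = signal[start:start + win] * window
        spectrum = np.abs(np.fft.fftshift(np.fft.fft(frame)))
        peaks.append(freqs[np.argmax(spectrum)])
    return np.array(peaks)


if __name__ == "__main__":
    print(f"walking at 1.4 m/s: {doppler_shift(1.4):.1f} Hz")
    fs, amp, rate = 1000.0, 0.2, 1.0        # arm swings 0.2 m once per second
    track = doppler_track(simulate_return(fs, 2.0, amp, rate), fs)
    expected_max = doppler_shift(amp * 2 * np.pi * rate)
    print(f"expected peak {expected_max:.1f} Hz, observed {np.max(np.abs(track)):.1f} Hz")
```

The tracked frequency swings between positive values (moving toward the radar) and negative values (moving away), and its resolution is limited by the FFT window: 1000 Hz / 128 bins, or about 7.8 Hz per bin here.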

Relying on RF signals already in the environment means there is no need for the type of calibration that a system like Patwari’s requires. On the other hand, such a system has to make do with whatever signals exist, instead of relying on a predictable signal like the one Katabi’s system transmits.

To train his action-recognition system, Chetty has volunteers wear suits studded with LEDs, a motion-capture setup similar to those used to create virtual reality scenarios. As a volunteer moves, Chetty and his team collect both visual data recorded by cameras and RF signals. The visual data provides ground truth for training the system to recognize actions from their micro-Doppler signatures. The measurements can be complex to decipher; for instance, the Doppler signal for the same action can look different depending on the angle from which it is viewed.
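
The angle effect follows from the Doppler relationship: only the component of velocity along the line of sight produces a shift, so a monostatic radar sees 2vf·cos(θ)/c, where θ is the angle between the direction of motion and the line of sight. The snippet below, assuming a monostatic geometry, a 5.8 GHz carrier, and a 1.4 m/s walking speed (all illustrative choices), shows how one motion yields very different shifts from different viewpoints.

```python
import math

C = 299_792_458.0   # speed of light, m/s
F_C = 5.8e9         # assumed carrier frequency, Hz


def doppler_at_angle(speed: float, angle_deg: float, carrier_hz: float = F_C) -> float:
    """Monostatic Doppler shift (Hz) for motion at angle_deg to the line of sight."""
    radial = speed * math.cos(math.radians(angle_deg))   # line-of-sight component
    return 2.0 * radial * carrier_hz / C


if __name__ == "__main__":
    for angle in (0, 30, 60, 90):
        print(f"{angle:3d} deg: {doppler_at_angle(1.4, angle):6.1f} Hz")
```

A person walking straight across the sensor's field of view (θ near 90 degrees) produces almost no Doppler shift, which is one reason a recognition model needs training data captured from many viewpoints.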

Some RF sensing technology is already in use. Former students of Patwari’s, for instance, formed a company called Xandem that sells sensors that use RF to detect human presence and motion. Systems using machine learning to identify actions, for use in healthcare or smart environments, still require further development. “We’re not there yet, but we’re working on it,” Chetty says.

Li, T., Fan, L., Zhao, M., Liu, Y., and Katabi, D. Making the Invisible Visible: Action Recognition Through Walls and Occlusions, 2019, arxiv.org/abs/1909.09300

Al-Husseiny, A., and Patwari, N. Unsupervised Learning of Signal Strength Models for Device-Free Localization, 2019 IEEE 20th International Symposium on “A World of Wireless, Mobile and Multimedia Networks”, DOI: 10.1109/WoWMoM.2019.8792970

Li, W., Piechocki, R., Woodbridge, K., and Chetty, K. WiFi Sensing, Physical Activity, Modified Cross Ambiguity Function, Stand-Alone WiFi Device, 2019 IEEE Global Communications Conference, https://discovery.ucl.ac.uk/id/eprint/10084427/

Wang, X., Wang, X., and Mao, S. RF Sensing in the Internet of Things: A General Deep Learning Framework, IEEE Communications Magazine, 2018, DOI: 10.1109/MCOM.2018.1701277

Dina Katabi, MIT China Summit, https://www.youtube.com/watch?v=9nVZwLqG6aI

Researchers Use Wi-Fi to See Through Walls, https://www.youtube.com/watch?v=fGZzNZnYIHo

AI Senses People Through Walls, https://www.youtube.com/watch?time_continue=59&v=HgDdaMy8KNE&feature=emb_logo
