Imagine you could capture a 3D scene and later revisit that scene from different viewpoints, perhaps seeing the action as it unfolded at capture time. We are accustomed to snapping 2D photographs or videos, which are then compactly stored on our phones or in the cloud. In contrast, the corresponding process for 3D capture is quite cumbersome. Traditionally, it involves taking many images of the scene, applying photogrammetry techniques to produce a dense surface reconstruction, and then cleaning up the result manually. However, the results can be spectacular and have been used to convey a sense of place not otherwise possible with 2D photography, for example, in recent interactive features from the New York Times.

Recently, many researchers have investigated whether the revolution in deep neural networks can put these same capabilities within reach of everyone and make 3D capture as easy as snapping a 2D picture. One technique in particular, neural volume rendering, exploded onto the scene in 2020, triggered by an impressive paper on Neural Radiance Fields, or NeRF. This novel method takes multiple images as input and produces a compact representation of the 3D scene in the form of a deep, fully connected neural network, the weights of which can be stored in a file not much bigger than a typical compressed image. This representation can then be used to render arbitrary views of the scene with surprising accuracy and detail.

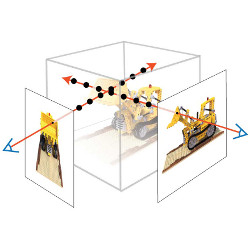

Neural volume rendering refers to deep image or video generation methods that trace rays into the scene and take an integral of some sort over the length of the ray. Typically, a fully connected neural network encodes a function from the 3D coordinates on the ray to quantities like density and color, which are then integrated to yield an image. An early version of neural volume rendering for view synthesis was introduced in the paper by Lombardi et al. [2], regressing a 3D volume of density and color, albeit still in a voxel-based representation.
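To make the idea of a network that maps ray coordinates to density and color concrete, here is a minimal sketch in plain Python. It is an illustration only: the weights are random and untrained, and the names `make_mlp`, `field`, and `points_on_ray`, as well as the layer sizes, are assumptions made for this example rather than anything taken from a published implementation.

```python
import math
import random


def make_mlp(sizes, seed=0):
    """Create random, untrained weights for a fully connected network."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        scale = 1.0 / math.sqrt(n_in)
        weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
                   for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def field(layers, point):
    """Map a 3D point to (density, rgb) with a small MLP."""
    h = list(point)
    for i, (weights, biases) in enumerate(layers):
        h = [sum(w * x for w, x in zip(row, h)) + b
             for row, b in zip(weights, biases)]
        if i < len(layers) - 1:
            h = [max(0.0, v) for v in h]  # ReLU on hidden layers only
    density = max(0.0, h[0])  # clamp so density is never negative
    # Sigmoid keeps each color channel in [0, 1].
    rgb = tuple(1.0 / (1.0 + math.exp(-v)) for v in h[1:4])
    return density, rgb


def points_on_ray(origin, direction, depths):
    """Return the 3D points origin + t * direction for each depth t."""
    return [tuple(o + t * d for o, d in zip(origin, direction))
            for t in depths]


# Query the (untrained) field at two points along a ray looking down +z.
layers = make_mlp([3, 16, 16, 4])
for p in points_on_ray((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), [1.0, 2.0]):
    print(p, field(layers, p))
```

In a trained model, the densities and colors returned along each ray would then be integrated to produce a pixel value.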

The immediate precursors to NeRF are the approaches that use a neural network to define an implicit 3D surface representation. Many 3D-aware image generation approaches used voxels, meshes, point clouds, or other representations. But at CVPR 2019, no fewer than three papers introduced the use of neural nets as scalar function approximators to define occupancy and/or signed distance functions: occupancy networks [3], IM-NET [1], and DeepSDF [4]. To date, many papers have built on top of the implicit function idea.

However, the NeRF paper is the one that got everyone talking. In essence, Mildenhall et al. take the DeepSDF architecture but directly regress density and color instead of a signed distance function. They then use an easily differentiable numerical integration method to approximate a true volumetric rendering step. A NeRF model stores a volumetric scene representation as the weights of an MLP, trained on images with known pose. New views are rendered by integrating the density and color at regular intervals along each viewing ray.
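The numerical integration along a ray can be sketched as a simple front-to-back accumulation. The following is a minimal illustration, assuming the standard emission-absorption quadrature in which a sample with density σ over an interval of length δ has opacity 1 − exp(−σδ). The function name `composite` and the example values are hypothetical, chosen for this sketch.

```python
import math


def composite(densities, colors, deltas):
    """Accumulate color along one ray, front to back.

    densities: density at each sample along the ray
    colors:    (r, g, b) at each sample
    deltas:    length of the interval each sample represents
    Returns the pixel color and the transmittance left behind the last sample.
    """
    transmittance = 1.0  # fraction of light not yet absorbed
    pixel = [0.0, 0.0, 0.0]
    for sigma, rgb, delta in zip(densities, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this interval
        weight = transmittance * alpha          # contribution of this sample
        pixel = [p + weight * c for p, c in zip(pixel, rgb)]
        transmittance *= 1.0 - alpha
    return tuple(pixel), transmittance


# Empty space followed by a thin, dense red layer and a green background.
pixel, remaining = composite(
    densities=[0.0, 4.0, 0.5],
    colors=[(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
    deltas=[0.5, 0.5, 0.5],
)
print(pixel, remaining)
```

Every step is built from exponentials, products, and sums, which is what makes the rendering step straightforward to differentiate with respect to the predicted densities and colors.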

The NeRF paper first appeared on arXiv in March 2020, sparking an explosion of interest, both because of the quality of the synthesized views and the incredible detail in the visualized depth maps. Arguably, the impact of the paper lies in its simplicity: a multilayer perceptron taking in a 5D coordinate and outputting density and color. There are some bells and whistles, notably positional encoding and a stratified sampling scheme, but many researchers were impressed that such a simple architecture could yield such impressive results. Another reason is that the "vanilla NeRF" paper provided many opportunities to improve upon it. Indeed, it is slow, both for training and rendering; it can only represent static scenes; it "bakes in" lighting; and, finally, it is scene specific, that is, it does not generalize.
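The two "bells and whistles" mentioned above can each be sketched in a few lines. This is an illustrative sketch, not the authors' code: it assumes a sinusoidal positional encoding with frequencies that double at each level, and a stratified scheme that draws one uniform sample from each of several equal-width depth bins. The function names and parameter values are hypothetical.

```python
import math
import random


def positional_encoding(coords, num_freqs):
    """Expand each coordinate into sin/cos features at doubling frequencies."""
    features = []
    for p in coords:
        for k in range(num_freqs):
            freq = (2.0 ** k) * math.pi
            features.append(math.sin(freq * p))
            features.append(math.cos(freq * p))
    return features


def stratified_depths(near, far, num_bins, rng):
    """Draw one random depth from each of num_bins equal-width bins."""
    width = (far - near) / num_bins
    return [near + (i + rng.random()) * width for i in range(num_bins)]


# A 3D point becomes 3 * 2 * num_freqs features.
encoded = positional_encoding((0.1, 0.2, 0.3), num_freqs=10)
print(len(encoded))

# Eight jittered sample depths between the near and far planes.
print(stratified_depths(2.0, 6.0, 8, random.Random(0)))
```

The encoding gives the network access to high-frequency variation in its inputs, while the jittered depths mean that, over many training iterations, the network is queried at continuous positions along each ray rather than at a fixed grid.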

In the fast-moving computer vision community, these opportunities were almost immediately capitalized on. Several projects and papers aim at improving the rather slow training and rendering time of the original NeRF paper, and many more efforts focus on dynamic scenes, using a variety of schemes, bringing arbitrary viewpoint video rendering within reach. Another dimension in which NeRF-style methods have been augmented is in how to deal with lighting, typically through latent codes that can be used to relight a scene, whereas other researchers use latent codes for generalization over shapes so training can be done from fewer images. Finally, an exciting new area of interest is how to support composition to enable more complex, dynamic scenes.

In summary, neural volume rendering has seen an explosion of interest in the community, and its fruits can be expected to make it to a smartphone near you soon.
