The network interface cards (NICs) of modern computers are changing to adapt to faster data rates and to help with the scaling issues of general-purpose CPU technologies. Among the ongoing innovations, the inclusion of programmable accelerators on the NIC’s data path is particularly interesting, since it provides the opportunity to offload some of the CPU’s network packet processing tasks to the accelerator. Given the strict latency constraints of packet processing tasks, accelerators are often implemented leveraging platforms such as Field-Programmable Gate Arrays (FPGAs). FPGAs can be re-programmed after deployment, to adapt to changing application requirements, and can achieve both high throughput and low latency when implementing packet processing tasks. However, they have limited resources that may need to be shared among diverse applications, and programming them is difficult and requires hardware design expertise.

We present hXDP, a solution to run on FPGAs the software packet processing tasks that are described with the eBPF technology and target Linux’s eXpress Data Path (XDP). hXDP uses only a fraction of the available FPGA resources, while matching the performance of high-end CPUs. The iterative execution model of eBPF is not a good fit for FPGA accelerators. Nonetheless, we show that many of the instructions of an eBPF program can be compressed, parallelized, or completely removed when targeting a purpose-built FPGA design, thereby significantly improving performance.

We implement hXDP on an FPGA NIC and evaluate it running real-world unmodified eBPF programs. Our implementation runs at 156.25MHz and uses about 15% of the FPGA resources. Despite these modest requirements, it can run dynamically loaded programs, achieves the packet processing throughput of a high-end CPU core, and provides a 10X lower packet forwarding latency.

1. Introduction

Computers in datacenter and telecom operator networks employ a large fraction of their CPU’s resources to process network traffic coming from their network interface cards (NICs). Enforcing security (for example, using a firewall function), monitoring network-level performance, and routing packets toward their intended destinations are just a few examples of the tasks being performed by these systems. With NICs’ port speeds growing beyond 100Gigabit/s (Gbps), and given the limitations in further scaling CPUs’ performance,17 new architectural solutions are being introduced to handle these growing workloads.

The inclusion of programmable accelerators on the NIC is one of the promising approaches to offload the resource-intensive packet processing tasks from the CPU, thereby saving its precious cycles for tasks that cannot be performed elsewhere. Nonetheless, achieving programmability for high-performance network packet processing tasks is an open research problem, with solutions exploring different areas of the solution space that compromise in different ways between performance, flexibility, and ease-of-use.25 As a result, today’s accelerators are implemented using different technologies, including Application-Specific Integrated Circuits (ASICs), Field-Programmable Gate Arrays (FPGAs), and many-core System-on-Chip.

FPGA-based NICs are especially interesting since they provide good performance together with a high degree of flexibility, which enables programmers to define virtually any function, provided that it fits in the available hardware resources. Compared to other accelerators for NICs, such as network processing ASICs4 or many-core System-on-Chip SmartNICs,27 the flexibility of FPGA NICs also gives the additional benefit of supporting diverse accelerators for a wider set of applications. For instance, Microsoft employs them in datacenters for both network and machine learning tasks,8, 11 and in telecom networks, they are also used to perform radio signal processing tasks.20, 31, 26 Nonetheless, programming FPGAs is difficult, often requiring the establishment of a dedicated team of hardware specialists,11 which interacts with software and operating system developers to integrate the offloading solution with the system. Furthermore, previous work that simplifies network functions programming on FPGAs assumes that a large share of the FPGA is fully dedicated to packet processing,1, 30, 28 reducing the ability to share the FPGA with other accelerators.

Our goal is to provide a more general and easy-to-use solution to program packet processing on FPGA NICs, using few FPGA resources, while seamlessly integrating with existing operating systems. We build toward this goal by presenting hXDP, a set of technologies that enables the efficient execution of Linux’s eXpress Data Path (XDP)19 on FPGA. XDP leverages the eBPF technology to provide secure programmable packet processing within the Linux kernel, and it is widely used by the Linux community in production environments. hXDP provides full XDP support, allowing users to dynamically load and run their unmodified XDP programs on the FPGA.

The challenge is therefore to run XDP programs effectively on FPGA, since the eBPF technology is originally designed for sequential execution on a high-performance RISC-like register machine. That is, eBPF is designed for server CPUs with a high clock frequency and the ability to execute many sequential eBPF instructions per second. Instead, FPGAs favor a widely parallel execution model with clock frequencies that are 5–10X lower than those of high-end CPUs. As such, a straightforward implementation of the eBPF iterative execution model on FPGA is likely to provide low packet forwarding performance. Furthermore, the hXDP design should implement arbitrary XDP programs while using few hardware resources, in order to keep the FPGA’s resources free for other accelerators.

We address the challenge by performing a detailed analysis of the eBPF Instruction Set Architecture (ISA) and of the existing XDP programs, to reveal and take advantage of opportunities for optimization. First, we identify eBPF instructions that can be safely removed when not running in the Linux kernel context. For instance, we remove data boundary checks and variable zero-ing instructions by providing targeted hardware support. Second, we define extensions to the eBPF ISA to introduce 3-operand instructions, new 6B load/store instructions, and a new parameterized program exit instruction. Finally, we leverage eBPF instruction-level parallelism, performing a static analysis of the programs at compile time, which allows us to execute several eBPF instructions in parallel. We design hXDP to implement these optimizations, and to take full advantage of the on-NIC execution environment, for example, avoiding unnecessary PCIe transfers. Our design includes the following: (i) a compiler to translate XDP programs’ bytecode to the extended hXDP ISA; (ii) a self-contained FPGA IP Core module that implements the extended ISA alongside several other low-level optimizations; and (iii) the toolchain required to dynamically load and interact with XDP programs running on the FPGA NIC.

To evaluate hXDP, we provide an open-source implementation for the NetFPGA.32 We test our implementation using the XDP example programs provided by the Linux source code and using two real-world applications: a simple stateful firewall and Facebook’s Katran load balancer. hXDP can match the packet forwarding throughput of a multi-GHz server CPU core, while providing a 10X lower forwarding latency. This is achieved despite the low clock frequency of our prototype (156MHz) and using less than 15% of the FPGA resources.

hXDP sources are at https://axbryd.io/technology.

2. Concept and Overview

Our main goal is to provide the ability to run XDP programs efficiently on FPGA NICs, while using few of the FPGA’s hardware resources (see Figure 1).

Figure 1. The hXDP concept. hXDP provides an easy-to-use network accelerator that shares the FPGA NIC resources with other application-specific accelerators.

Limited use of the FPGA resources is especially important, since it enables extra consolidation by packing different application accelerators on the same FPGA.

The choice of supporting XDP is instead motivated by a twofold benefit brought by the technology: It readily enables NIC offloading for already deployed XDP programs, and it provides an on-NIC programming model that is already familiar to a large community of Linux programmers; thus, developers do not need to learn new programming paradigms, such as those introduced by P43 or FlowBlaze.28

Non-Goals: unlike previous work targeting FPGA NICs,30,1,28 hXDP does not assume the FPGA to be dedicated to network processing tasks. Because of that, hXDP adopts an iterative processing model, which is in stark contrast to the pipelined processing model supported by previous work. The iterative model requires a fixed amount of resources, no matter the complexity of the program being implemented. Instead, in the pipeline model the resource requirement depends on the complexity of the implemented program, since programs are effectively “unrolled” in the FPGA. In fact, hXDP provides dynamic runtime loading of XDP programs, whereas solutions such as P4->NetFPGA30 or FlowBlaze often need to load a new FPGA bitstream when changing applications. As such, hXDP is not designed to be faster at processing packets than those designs. Instead, hXDP aims at freeing precious CPU resources, which can then be dedicated to workloads that cannot run elsewhere, while providing similar or better performance than the CPU.

Likewise, hXDP cannot be directly compared to SmartNICs dedicated to network processing. Such NICs’ resources are largely, often exclusively, devoted to network packet processing. Instead, hXDP leverages only a fraction of an FPGA’s resources to add packet processing with good performance, alongside other application-specific accelerators, which share the same chip’s resources.

Requirements: given the above discussion, we can derive three high-level requirements for hXDP:

- It should execute unmodified compiled XDP programs and support the XDP framework’s toolchain, for example, dynamic program loading and userspace access to maps;

- It should provide packet processing performance at least comparable to that of a high-end CPU core;

- It should require a small amount of the FPGA’s hardware resources.

Before presenting a more detailed description of the hXDP concept, we now give a brief background about XDP.

XDP allows programmers to inject programs at the NIC driver level, so that such programs are executed before a network packet is passed to Linux’s network stack. XDP programs are based on Linux’s eBPF technology. eBPF provides an in-kernel virtual machine for the sandboxed execution of small programs within the kernel context. In its current version, the eBPF virtual machine has 11 64b registers: r0 holds the return value from in-kernel functions and programs, r1–r5 are used to store arguments that are passed to in-kernel functions, r6–r9 are registers that are preserved during function calls, and r10 stores the frame pointer to access the stack. The eBPF virtual machine has a well-defined ISA composed of more than 100 fixed-length (64b) instructions. Programmers usually write an eBPF program using the C language with some restrictions, which simplify the static verification of the program.
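To make the fixed-length instruction format concrete, the following minimal sketch decodes two 64b eBPF instructions with Python's standard `struct` module. The field layout (8-bit opcode, two 4-bit register indexes, a 16-bit offset, and a 32-bit immediate) follows the eBPF instruction encoding on a little-endian host; the `Insn` and `decode` names are ours and purely illustrative.

```python
import struct
from typing import NamedTuple

class Insn(NamedTuple):
    opcode: int   # 8-bit operation code
    dst: int      # 4-bit destination register index (r0..r10)
    src: int      # 4-bit source register index
    off: int      # signed 16-bit offset
    imm: int      # signed 32-bit immediate

def decode(raw: bytes) -> Insn:
    """Decode one 8-byte (64b) eBPF instruction, little-endian layout."""
    opcode, regs, off, imm = struct.unpack("<BBhi", raw)
    # The low nibble of the second byte is dst, the high nibble is src.
    return Insn(opcode, regs & 0x0F, regs >> 4, off, imm)

# "r0 = 2; exit": store an action code in r0, then leave the program.
program = bytes.fromhex("b700000002000000" "9500000000000000")
insns = [decode(program[i:i + 8]) for i in range(0, len(program), 8)]
for insn in insns:
    print(insn)
```

The first instruction (opcode 0xb7) is a 64b move of an immediate into r0, and the second (opcode 0x95) is exit. In XDP, the value 2 left in r0 corresponds to the PASS action.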

eBPF programs can also access kernel memory areas called maps, that is, kernel memory locations that essentially resemble tables. For instance, eBPF programs can use maps to implement arrays and hash tables. An eBPF program can interact with a map’s locations by means of pointer dereference, for unstructured data access, or by invoking specific helper functions for structured data access, for example, a lookup on a map configured as a hash table. Maps are especially important since they are the only means to keep state across program executions, and to share information with other eBPF programs and with programs running in user space.

To gain an intuitive understanding of the design challenge involved in supporting XDP on FPGA, we now consider the example of an XDP program that implements a simple stateful firewall for checking the establishment of bi-directional TCP or UDP flows. A C program describing this simple firewall function is compiled to 71 eBPF instructions.
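The logic of such a firewall can be sketched in a few lines. The following Python model is not the program evaluated here: the policy (flows may only be opened from the internal side), the flow key layout, and all names are assumptions made for illustration. A dictionary stands in for the eBPF hash map that keeps state across program executions.

```python
from typing import Dict, Tuple

# (source IP, destination IP, source port, destination port, protocol)
FlowKey = Tuple[str, str, int, int, str]

# Stands in for an eBPF hash map: the only state kept across executions.
flow_table: Dict[FlowKey, bool] = {}

XDP_DROP, XDP_TX = 1, 3   # action codes from Linux's enum xdp_action

def reverse(key: FlowKey) -> FlowKey:
    """Key of the same flow as seen in the opposite direction."""
    src, dst, sport, dport, proto = key
    return (dst, src, dport, sport, proto)

def firewall(key: FlowKey, from_internal: bool) -> int:
    """Runs once per packet, like an XDP program (assumed policy)."""
    if key[4] not in ("tcp", "udp"):
        return XDP_DROP
    if from_internal:
        flow_table[key] = True        # map update: remember the flow
        return XDP_TX
    # External packets pass only if the reverse direction was seen before.
    return XDP_TX if reverse(key) in flow_table else XDP_DROP

request = ("10.0.0.1", "8.8.8.8", 5000, 53, "udp")
print(firewall(reverse(request), from_internal=False))  # reply before request
print(firewall(request, from_internal=True))            # request opens the flow
print(firewall(reverse(request), from_internal=False))  # reply is now accepted
```

The first reply is dropped because no state exists yet; once the request has been recorded in the table, the same reply is forwarded.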

We can build a rough idea of the potential best-case speed of this function running on an FPGA-based eBPF executor, assuming that each eBPF instruction requires 1 clock cycle to be executed, that clock cycles are not spent for any other operation, and that the FPGA has a 156MHz clock rate, which is common in FPGA NICs.32 In such a case, a naive FPGA implementation that implements the sequential eBPF executor would provide a maximum throughput of 2.8 Million packets per second (Mpps), under optimistic assumptions, for example, assuming no additional overheads due to queue management. For comparison, when running on a single core of a high-end server CPU clocked at 3.7GHz, and including also operating system overhead and the PCIe transfer costs, the XDP simple firewall program achieves a throughput of 7.4Mpps.a Since it is often undesirable or impossible to increase the FPGA clock rate, for example, due to power constraints, in the absence of other solutions the FPGA-based executor would be 2–3X slower than the CPU core.
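The bound above is simple arithmetic: clock rate divided by cycles spent per packet. The sketch below reproduces it, using the 156.25MHz clock of the prototype. Note that the quoted 2.8Mpps corresponds to about 56 cycles per packet rather than 71; we assume, without confirmation from the text, that this reflects packets executing only part of the 71 compiled instructions.

```python
def max_mpps(clock_hz: float, cycles_per_packet: float) -> float:
    """Best-case throughput (Mpps) of a one-instruction-per-cycle executor."""
    return clock_hz / cycles_per_packet / 1e6

CLOCK_HZ = 156.25e6        # FPGA clock rate used by the prototype
CPU_MPPS = 7.4             # x86 core at 3.7GHz, as reported in the text
QUOTED_BOUND_MPPS = 2.8    # optimistic FPGA bound, as reported in the text

# Bound if all 71 compiled instructions were executed for every packet.
all_insns_mpps = max_mpps(CLOCK_HZ, 71)

# Cycles per packet implied by the quoted 2.8Mpps bound.
implied_cycles = CLOCK_HZ / (QUOTED_BOUND_MPPS * 1e6)

print(f"71 cycles/packet: {all_insns_mpps:.2f} Mpps")
print(f"cycles/packet implied by 2.8 Mpps: {implied_cycles:.1f}")
print(f"CPU advantage over quoted bound: {CPU_MPPS / QUOTED_BOUND_MPPS:.2f}x")
```

Either way, the sequential executor stays well below the CPU core, which is the point of the comparison.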

Furthermore, existing solutions to speed-up sequential code execution, for example, superscalar architectures, are too expensive in terms of hardware resources to be adopted in this case. In fact, in a superscalar architecture the speed-up is achieved leveraging instruction-level parallelism at runtime. However, the complexity of the hardware required to do so grows exponentially with the number of instructions being checked for parallel execution. This rules out re-using general-purpose soft-core designs, such as those based on RISC-V.16, 14

hXDP addresses the outlined challenge by taking a software-hardware co-design approach. In particular, hXDP provides both a compiler and the corresponding hardware module. The compiler takes advantage of eBPF ISA optimization opportunities, leveraging hXDP’s hardware module features that are introduced to simplify the exploitation of such opportunities. Effectively, we design a new ISA that extends the eBPF ISA, specifically targeting the execution of XDP programs.

The compiler optimizations perform transformations at the eBPF instruction level: remove unnecessary instructions; replace instructions with newly defined more concise instructions; and parallelize instruction execution. All the optimizations are performed at compile time, moving most of the complexity to the software compiler, thereby reducing the target hardware complexity. Accordingly, the hXDP hardware module implements an infrastructure to run up to 4 instructions in parallel, implementing a Very Long Instruction Word (VLIW) soft processor. The VLIW soft processor does not provide any runtime program optimization, for example, branch prediction or instruction reordering. We rely entirely on the compiler to optimize XDP programs for high-performance execution, thereby freeing the hardware module of complex mechanisms that would use more hardware resources.

Ultimately, the hXDP hardware component is deployed as a self-contained IP core module to the FPGA. The module can be interfaced with other processing modules if needed, or just placed as a bump-in-the-wire between the NIC’s port and its PCIe driver toward the host system. The hXDP software toolchain, which includes the compiler, provides all the machinery to use hXDP within a Linux operating system.

From a programmer’s perspective, a compiled eBPF program could therefore be executed interchangeably in-kernel or on the FPGA (see Figure 2).

Figure 2. An overview of the XDP workflow and architecture, including the contribution of this article.

3. hXDP Compiler

We now describe the hXDP instruction-level optimizations and the compiler design to implement them.

Instructions reduction: the eBPF technology is designed to enable execution within the Linux kernel, for which it requires programs to include a number of extra instructions, which are then checked by the kernel’s verifier. When targeting a dedicated eBPF executor implemented on FPGA, most of such instructions could be safely removed, or they can be replaced by cheaper embedded hardware checks. Two relevant examples are instructions for memory boundary checks and memory zero-ing (Figure 3).

Figure 3. Examples of instructions removed by hXDP.

Boundary checks are required by the eBPF verifier to ensure programs only read valid memory locations, whenever a pointer operation is involved. In hXDP, we can safely remove these instructions, implementing the check directly in hardware.

Zero-ing is the process of setting a newly created variable to zero, and it is a common operation performed by programmers both for safety and for ensuring correct execution of their programs. A dedicated FPGA executor can provide hard guarantees that all relevant memory areas are zero-ed at program start, therefore making the explicit zero-ing of variables during initialization redundant.
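As an illustration of the zero-ing case, the following toy pass removes stores of zero to stack slots that are still untouched, relying on the assumption that the executor resets the stack before each program run. The tuple-based instruction format is invented for this sketch, and the pass only handles straight-line code; it is not the hXDP compiler.

```python
from typing import List, Tuple

Insn = Tuple  # ("op", operand, ...): a made-up toy format

def drop_redundant_zeroing(prog: List[Insn]) -> List[Insn]:
    """Remove zero stores to stack slots not written earlier (no branches)."""
    written = set()   # stack slots written so far
    out = []
    for insn in prog:
        op = insn[0]
        if op == "st_imm":                 # ("st_imm", slot, value)
            _, slot, value = insn
            if value == 0 and slot not in written:
                continue                   # slot is still zero from the reset
            written.add(slot)
        elif op == "st_reg":               # ("st_reg", slot, register)
            written.add(insn[1])
        out.append(insn)
    return out

prog = [
    ("st_imm", -8, 0),      # zero-ing: redundant after a hardware reset
    ("st_imm", -16, 0),     # zero-ing: redundant after a hardware reset
    ("st_reg", -8, "r1"),   # real store
    ("st_imm", -8, 0),      # must stay: the slot was overwritten above
    ("ld", "r2", -16),      # reads a slot that is still zero
]
optimized = drop_redundant_zeroing(prog)
print(len(prog), "->", len(optimized), "instructions")
```

Only the first two stores are dropped; the later zero store to slot -8 is kept because the slot no longer holds its reset value.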

ISA extension: to effectively reduce the number of instructions, we define an ISA that enables a more concise description of the program. Here, there are two factors at play to our advantage. First, we can extend the ISA without accounting for constraints related to the need to support efficient Just-In-Time compilation. Second, our eBPF programs are part of XDP applications, and as such, we can expect packet processing as the main program task. Leveraging these two facts we define a new ISA that changes in three main ways the original eBPF ISA.

Operands number. The first significant change concerns the inclusion of three-operand operations, in place of eBPF’s two-operand ones. Here, we believe that the eBPF ISA’s selection of two-operand operations was mainly dictated by the assumption that an x86 ISA would be the final compilation target. Instead, using three-operand instructions allows us to implement an operation that would normally need two instructions with just a single instruction.
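A peephole pass captures the idea: a register move followed by a two-operand ALU operation on the same destination collapses into one three-operand instruction. The sketch below uses a toy tuple format and hypothetical mnemonics such as add3; it assumes straight-line code in which the second instruction is not a jump target.

```python
ALU_OPS = {"add", "sub", "and", "or", "xor"}

def fuse_three_operand(prog):
    """Fuse 'mov d, a; op d, b' into 'op3 d, a, b' (toy, straight-line code)."""
    out, i = [], 0
    while i < len(prog):
        cur = prog[i]
        nxt = prog[i + 1] if i + 1 < len(prog) else None
        if (cur[0] == "mov" and nxt is not None
                and nxt[0] in ALU_OPS and nxt[1] == cur[1]):
            dst, a, b = cur[1], cur[2], nxt[2]
            if b == dst:        # second operand reads the freshly moved value
                b = a
            out.append((nxt[0] + "3", dst, a, b))
            i += 2              # two instructions consumed, one emitted
        else:
            out.append(cur)
            i += 1
    return out

two_operand = [
    ("mov", "r4", "r2"),   # r4 = r2
    ("add", "r4", "r3"),   # r4 = r4 + r3
    ("mov", "r0", "r1"),   # unrelated move, left untouched
]
print(fuse_three_operand(two_operand))
```

The first two instructions become a single add3 that computes r4 = r2 + r3, while the trailing move has no ALU operation to pair with and is kept.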

Load/store size. The eBPF ISA includes byte-aligned memory load/store operations, with sizes of 1B, 2B, 4B, and 8B. While these instructions are effective for most cases, we noticed that during packet processing the use of 6B load/store can reduce the number of instructions in common cases. In fact, 6B is the size of an Ethernet MAC address, which is a commonly accessed field. Extending the eBPF ISA with 6B load/store instructions often halves the instructions required to access such fields.
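The saving is easy to count. The sketch below copies the source MAC address out of an Ethernet header using the largest access size available at each step, once with the standard eBPF sizes and once with the additional 6B size. The helper name and the greedy size selection are assumptions made for illustration.

```python
def copy_field(src: bytes, offset: int, length: int, sizes) -> tuple:
    """Copy `length` bytes using the largest allowed access sizes.

    Returns (copied bytes, number of load/store instructions used).
    """
    dst = bytearray()
    insns = 0
    pos, remaining = offset, length
    while remaining:
        size = max(s for s in sizes if s <= remaining)
        dst += src[pos:pos + size]   # one load of `size` bytes ...
        insns += 2                   # ... plus the matching store
        pos += size
        remaining -= size
    return bytes(dst), insns

# Ethernet header: destination MAC, source MAC, EtherType (IPv4).
frame = bytes.fromhex("ffffffffffff" "021122334455" "0800")

mac_std, insns_std = copy_field(frame, 6, 6, sizes=(1, 2, 4, 8))
mac_ext, insns_ext = copy_field(frame, 6, 6, sizes=(1, 2, 4, 6, 8))
print(mac_std.hex(), insns_std)
print(mac_ext.hex(), insns_ext)
```

With the standard sizes the copy needs a 4B and a 2B load/store pair (four instructions); with the 6B size it needs one pair (two instructions).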

Parameterized exit. The end of an eBPF program is marked by the exit instruction. In XDP, programs set r0 to a value corresponding to the desired forwarding action (for example, DROP or TX); then, when a program exits, the framework checks the r0 register to finally perform the forwarding action. We extend the ISA with a parameterized exit instruction that embeds the forwarding action, which removes the need to set r0 beforehand. While this extension of the ISA only saves one (runtime) instruction per program, as we will see in Section 4, it will also enable more significant hardware optimizations (Figure 4).
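The rewrite can be sketched as another peephole pass over a toy instruction list. The exit_imm mnemonic is hypothetical, and the pass assumes that the exit instruction is not the target of a jump that bypasses the preceding move.

```python
def fold_exit(prog):
    """Replace 'mov_imm r0, action; exit' with a single 'exit_imm action'."""
    out = []
    for insn in prog:
        prev = out[-1] if out else None
        if (insn == ("exit",) and prev is not None
                and prev[0] == "mov_imm" and prev[1] == "r0"):
            out[-1] = ("exit_imm", prev[2])   # action embedded in the exit
        else:
            out.append(insn)
    return out

XDP_DROP = 1   # action code from Linux's enum xdp_action

tail = [("mov_imm", "r1", 7), ("mov_imm", "r0", XDP_DROP), ("exit",)]
print(fold_exit(tail))
```

The move into r0 disappears and the forwarding action travels with the exit instruction itself.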

Figure 4. Examples of hXDP ISA extensions.

Instruction parallelism. Finally, we explore the opportunity to perform parallel processing of an eBPF program’s instructions. Since our target is to keep the hardware design as simple as possible, we do not introduce runtime mechanisms for that and instead perform only a static analysis of the instruction-level parallelism of eBPF programs at compile time. We therefore design a custom compiler to implement the optimizations outlined in this section and to transform XDP programs into a schedule of parallel instructions that can run with hXDP. The compiler analyzes eBPF bytecode, considering both (i) the Data & Control Flow dependencies and (ii) the hardware constraints of the target platform. The schedule can be visualized as a virtually infinite set of rows, each with multiple available spots, which need to be filled with instructions. The number of spots corresponds to the number of execution lanes of the target executor. The compiler fits the given XDP program’s instructions in the smallest number of rows, while respecting the three Bernstein conditions that ensure the ability to run the selected instructions in parallel.2
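The row-filling idea can be sketched with a greedy scheduler for a single straight-line block. Two instructions may share a row only if they satisfy the three Bernstein conditions: neither writes what the other reads, and they do not write the same location. This is a simplified illustration with invented names; it ignores control flow and hardware constraints other than the lane count, and a greedy placement is not guaranteed to find the minimum number of rows.

```python
from typing import FrozenSet, List, NamedTuple

class Insn(NamedTuple):
    name: str
    reads: FrozenSet[str]
    writes: FrozenSet[str]

def insn(name, reads=(), writes=()) -> Insn:
    return Insn(name, frozenset(reads), frozenset(writes))

def bernstein_independent(a: Insn, b: Insn) -> bool:
    """True if a and b can run in parallel (no RAW, WAR, or WAW conflict)."""
    return not (a.writes & b.reads or a.reads & b.writes or a.writes & b.writes)

def schedule(prog: List[Insn], lanes: int = 4) -> List[List[Insn]]:
    rows: List[List[Insn]] = []   # each row is issued as one VLIW instruction
    row_of: List[int] = []        # row assigned to each placed instruction
    for cur in prog:
        earliest = 0
        # A conflicting predecessor forces cur into a strictly later row.
        for prev, r in zip(prog, row_of):
            if not bernstein_independent(prev, cur):
                earliest = max(earliest, r + 1)
        row = earliest
        while row < len(rows) and len(rows[row]) >= lanes:
            row += 1              # skip rows with no free lane
        if row == len(rows):
            rows.append([])
        rows[row].append(cur)
        row_of.append(row)
    return rows

block = [
    insn("ld_a", reads=["pkt"], writes=["r1"]),
    insn("ld_b", reads=["pkt"], writes=["r2"]),
    insn("add", reads=["r1", "r2"], writes=["r3"]),
    insn("zero", writes=["r4"]),
    insn("and", reads=["r3", "r4"], writes=["r3"]),
]
for row in schedule(block):
    print([i.name for i in row])
```

On this block the five instructions fit in three rows: the two loads and the independent register initialization share the first row, while the dependent add and and instructions each need a later row.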

4. Hardware Module

We design hXDP as an independent IP core, which can be added to a larger FPGA design as needed. Our IP core comprises the elements to execute all the XDP functional blocks on the NIC, including helper functions and maps.

4.1. Architecture and components

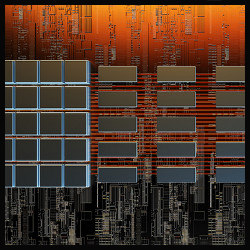

The hXDP hardware design includes five components (see Figure 5): the Programmable Input Queue (PIQ); the Active Packet Selector (APS); the Sephirot processing core; the Helper Functions Module (HF); and the Memory Maps Module (MM). All the modules work in the same clock frequency domain. Incoming data is received by the PIQ. The APS reads a new packet from the PIQ into its internal packet buffer. In doing so, the APS provides a byte-aligned access to the packet data through a data bus, which Sephirot uses to selectively read/write the packet content. When the APS makes a packet available to the Sephirot core, the execution of a loaded eBPF program starts. Instructions are entirely executed within Sephirot, using four parallel execution lanes, unless they call a helper function or read/write to maps. In such cases, the corresponding modules are accessed using the helper bus and the data bus, respectively. We detail the architecture’s core component, that is, the Sephirot eBPF processor, next.

Figure 5. The logic architecture of the hXDP hardware design.

Sephirot is a VLIW processor with four parallel lanes that execute eBPF instructions. Sephirot is designed as a pipeline of four stages: instruction fetch (IF); instruction decode (ID); instruction execute (IE); and commit. A program is stored in a dedicated instruction memory, from which Sephirot fetches the instructions in order. The processor has another dedicated memory area to implement the program’s stack, which is 512B in size, and 11 64b registers stored in the register file. These memory and register locations match one-to-one the eBPF virtual machine specification. Sephirot begins execution when the APS has a new packet ready for processing and gives the processor the start signal.

On processor start (IF stage), a VLIW instruction is read and the 4 extended eBPF instructions that compose it are statically assigned to their respective execution lanes. In this stage, the operands of the instructions are pre-fetched from the register file. The remaining 3 pipeline stages are performed in parallel by the four execution lanes. During ID, memory locations are pre-fetched, if any of the eBPF instructions is a load, while at the IE stage the relevant subunit is activated, using the related pre-fetched values. The subunits are the Arithmetic and Logic Unit (ALU), the Memory Access Unit, and the Control Unit. The ALU implements all the operations described by the eBPF ISA, with the notable difference that it is capable of performing operations on three operands. The memory access unit abstracts the access to the different memory areas, that is, the stack, the packet data stored in the APS, and the maps memory. The control unit provides the logic to modify the program counter, for example, to perform a jump and to invoke helper functions. Finally, during the commit stage the results of the IE phase are stored back to the register file, or to one of the memory areas. Sephirot terminates execution when it finds an exit instruction, in which case it signals to the APS the packet forwarding decision.

We now list a subset of notable architectural optimizations we applied to our design.

Program state self-reset. As we have seen in Section 3, eBPF programs may perform zero-ing of the variables they are going to use. We provide automatic reset of the stack and of the registers at program initialization. This is an inexpensive feature in hardware, which improves security9 and allows us to remove any such zero-ing instruction from the program.

Parallel branching. The presence of branch instructions may cause performance problems with architectures that lack branch prediction, speculative execution, and out-of-order execution. For Sephirot, this forces a serialization of the branch instructions. However, in XDP programs there are often series of branches in close sequence, especially during header parsing. We enabled the parallel execution of such branches, establishing a priority ordering of Sephirot’s lanes. That is, all the branch instructions are executed in parallel by the VLIW’s lanes. If more than one branch is taken, the highest priority one is selected to update the program counter. The compiler takes that into account when scheduling instructions, ordering the branch instructions accordingly.b
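The selection rule amounts to a priority encoder over the lanes. The sketch below models it in software; treating the first list entry as the highest priority lane and using row indexes as program counter values are assumptions of this illustration.

```python
from typing import List, Optional, Tuple

Branch = Optional[Tuple[bool, int]]   # None, or (taken, target row)

def next_pc(pc: int, lane_branches: List[Branch]) -> int:
    """Pick the next row given the branch outcome of each lane.

    lane_branches is ordered from highest to lowest priority.
    """
    for branch in lane_branches:
        if branch is not None and branch[0]:
            return branch[1]      # first taken branch in priority order wins
    return pc + 1                 # no branch taken: fall through

# One row of a header parser with three branches evaluated together.
row = [
    (False, 40),   # lane 0: not taken
    (True, 12),    # lane 1: taken, highest priority among taken branches
    (True, 30),    # lane 2: taken, but overridden by lane 1
    None,          # lane 3: holds a non-branch instruction
]
print(next_pc(5, row))
```

Because the compiler orders branches by lane priority, the outcome matches what sequential execution of the same branches would produce.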

Early processor exit. The processor stops when an exit instruction is executed. The exit instruction is recognized during the IF phase, which allows us to stop the processor pipeline early and save the three remaining clock cycles. This optimization also improves the performance gain obtained by extending the ISA with parameterized exit instructions, as described in Section 3. In fact, XDP programs usually perform a move of a value to r0, to define the forwarding action, before calling an exit. Setting a value to a register always needs to traverse the entire Sephirot pipeline. Instead, with a parameterized exit we remove the need to assign a value to r0, since the value is embedded in a newly defined exit instruction.

We prototyped hXDP using the NetFPGA,32 a board embedding four 10Gb ports and a Xilinx Virtex7 FPGA. The hXDP implementation uses a frame size of 32B and is clocked at 156.25MHz. Both settings come from the standard configuration of the NetFPGA reference NIC design.

The hXDP FPGA IP core takes 9.91% of the FPGA logic resources, 2.09% of the register resources, and 3.4% of the FPGA’s available BRAM. The APS and Sephirot are the components that need the most logic resources, since they are the most complex ones. Interestingly, even somewhat complex helper functions, for example, a helper function to implement a hashmap lookup, have just a minor contribution in terms of required logic, which confirms that including them in the hardware design comes at little cost while providing good performance benefits. When including the NetFPGA’s reference NIC design, that is, to build a fully functional FPGA-based NIC, the overall occupation of resources grows to 18.53%, 7.3%, and 14.63% for logic, registers, and BRAM, respectively. This is a relatively low occupation level, which enables the use of the largest share of the FPGA for other accelerators.
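The share left for other accelerators follows directly from these figures. The short calculation below assumes that the reported percentages are additive, so that the reference NIC's own footprint is the difference between the two configurations; the text does not state this explicitly.

```python
# Percent of FPGA resources, as reported in the text.
HXDP_CORE = {"logic": 9.91, "registers": 2.09, "bram": 3.4}
WITH_REFERENCE_NIC = {"logic": 18.53, "registers": 7.3, "bram": 14.63}

# Share still available to other accelerators on the same FPGA.
free_share = {k: round(100 - v, 2) for k, v in WITH_REFERENCE_NIC.items()}

# Footprint of the reference NIC alone (assumes additive percentages).
nic_only = {k: round(WITH_REFERENCE_NIC[k] - HXDP_CORE[k], 2) for k in HXDP_CORE}

for kind in HXDP_CORE:
    print(f"{kind}: reference NIC {nic_only[kind]}%, free {free_share[kind]}%")
```

Under this assumption, more than 80% of each resource type remains available after deploying a complete hXDP-based NIC.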

5. Evaluation

We use a selection of Linux’s XDP example applications and two real-world applications to perform the hXDP evaluation. The Linux examples are described in Table 1. The real-world applications are the simple firewall described in Section 2 and Facebook’s Katran server load balancer.10 Katran is a high-performance software load balancer that translates virtual addresses to actual server addresses using a weighted scheduling policy and providing per-flow consistency. Furthermore, Katran collects several flow metrics and performs IPinIP packet encapsulation.

Table 1. Tested Linux XDP example programs.

Using these applications, we perform an evaluation of the impact of the compiler optimizations on the programs’ number of instructions and the achieved level of parallelism. Then, we evaluate the performance of our NetFPGA implementation. We use the microbenchmarks also to compare the hXDP prototype performance with a Netronome NFP4000 SmartNIC. Although the two devices target different deployment scenarios, this can provide further insights on the effect of the hXDP design choices. Unfortunately, the NFP4000 offers only limited eBPF support, which does not allow us to run a complete evaluation. We further include a comparison of hXDP to other FPGA NIC programming solutions, before concluding the section with a brief discussion of the evaluation results.

Compiler. Figure 6 shows the number of VLIW instructions produced by the compiler. We show the reduction provided by each optimization as a stacked column and also report the number of x86 instructions produced by Linux’s eBPF JIT compiler. In this figure, we report the gain for instruction parallelization, and the additional gain from code movement, which is the gain obtained by anticipating instructions from control equivalent blocks. As we can see, the compiler is capable of providing a number of VLIW instructions that is often 2–3x smaller than the original program’s number of instructions. Notice that, by contrast, the output of the JIT compiler for x86 usually grows the number of instructions.c

Figure 6. Number of VLIW instructions, and impact of optimizations on its reduction.

Instructions per cycle. We compare the parallelization level obtained at compile time by hXDP with the runtime parallelization performed by the x86 CPU core. Table 2 shows that the static hXDP parallelization often achieves a parallelization level as good as the one achieved by the complex x86 runtime machinery.d

Table 2. Programs’ number of instructions, x86 runtime instruction-per-cycle (IPC) and hXDP static IPC mean rates.

Hardware performance. We compare hXDP with XDP running on a server machine and with the XDP offloading implementation provided by a SoC-based Netronome NFP4000 SmartNIC. The NFP4000 has 60 programmable network processing cores (called microengines), clocked at 800MHz. The server machine is equipped with an Intel Xeon E5-1630 v3 @3.70GHz and an Intel XL710 40GbE NIC, and runs Linux v.5.6.4 with the i40e Intel NIC drivers. During the tests, we use different CPU frequencies, that is, 1.2GHz, 2.1GHz, and 3.7GHz, to cover a larger spectrum of deployment scenarios. In fact, many deployments favor CPUs with lower frequencies and a higher number of cores.15 We use a DPDK packet generator to perform throughput and latency measurements. The packet generator is capable of generating a 40Gbps throughput with any packet size and it is connected back-to-back with the system-under-test, that is, the hXDP prototype running on the NetFPGA, the Netronome SmartNIC, or the Linux server running XDP. Delay measurements are performed using hardware packet timestamping at the traffic generator’s NIC and measure the round-trip time. Unless otherwise stated, all the tests are performed using packets with size 64B belonging to a single network flow. This is a challenging workload for the systems under test.

Applications performance. In Section 2, we mentioned that an optimistic upper-bound for the simple firewall performance would have been 2.8Mpps. When using hXDP with all the compiler and hardware optimizations described in this paper, the same application achieves a throughput of 6.53Mpps, as shown in Figure 7. This is only 12% slower than the same application running on a powerful x86 CPU core clocked at 3.7GHz and 55% faster than the same CPU core clocked at 2.1GHz. In terms of latency, hXDP provides about 10x lower packet processing latency, for all packet sizes (see Figure 8). This is the case since hXDP avoids crossing the PCIe bus and has no software-related overheads. We omit latency results for the remaining applications, since they are not significantly different. While we are unable to run the simple firewall application using the Netronome’s eBPF implementation, Figure 8 shows also the forwarding latency of the Netronome NFP4000 (nfp label) when programmed with an XDP program that only performs packet forwarding. Even in this case, we can see that hXDP provides a lower forwarding latency, especially for packets of smaller sizes.
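The relative figures quoted above can be checked against the absolute numbers given in the text. In the sketch below, the 7.4Mpps value for the 3.7GHz core comes from Section 2, while the throughput of the 2.1GHz core is not stated and is derived here from the quoted 55% gap, so it should be read as an estimate rather than a measurement.

```python
HXDP_MPPS = 6.53           # simple firewall on hXDP
X86_3_7GHZ_MPPS = 7.4      # same program on the x86 core at 3.7GHz (Section 2)
NAIVE_BOUND_MPPS = 2.8     # optimistic bound for a sequential FPGA executor

# Fraction by which hXDP is slower than the 3.7GHz core.
slowdown_vs_x86 = 1 - HXDP_MPPS / X86_3_7GHZ_MPPS

# Gain of the optimized design over the naive sequential bound.
gain_vs_naive = HXDP_MPPS / NAIVE_BOUND_MPPS

# Throughput of the 2.1GHz core implied by "55% faster" (derived estimate).
implied_x86_2_1ghz = HXDP_MPPS / 1.55

print(f"slower than 3.7GHz core: {slowdown_vs_x86:.1%}")
print(f"gain over naive bound: {gain_vs_naive:.2f}x")
print(f"implied 2.1GHz throughput: {implied_x86_2_1ghz:.2f} Mpps")
```

The computed slowdown rounds to the 12% reported above, and the optimizations more than double the throughput of the naive sequential bound.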

Figure 7. Throughput for real-world applications. hXDP is faster than a high-end CPU core clocked at over 2GHz.

Figure 8. Packet forwarding latency for different packet sizes.

When measuring Katran, we find that hXDP is instead 38% slower than the x86 core at 3.7GHz and only 8% faster than the same core clocked at 2.1GHz. The reason for this relatively worse hXDP performance is the overall program length. Katran’s program has many instructions; as a result, executors with a very high clock frequency have an advantage, since they can run more instructions per second. However, note that the CPUs deployed at, for example, Facebook’s datacenters15 have clock frequencies close to 2.1GHz, favoring many-core deployments over high-frequency ones. hXDP clocked at 156MHz is still capable of outperforming a CPU core clocked at 2.1GHz.

Linux examples. Finally, we measure the performance of the Linux XDP examples listed in Table 1. These applications allow us to better understand the hXDP performance with programs of different types (see Figure 9). We can identify three categories of programs. First, programs that forward packets to the NIC interfaces are faster when running on hXDP. These programs do not pass packets to the host system, and thus, they can live entirely in the NIC. For such programs, hXDP usually performs at least as well as a single x86 core clocked at 2.1GHz. In fact, processing XDP on the host system incurs the additional PCIe transfer overhead to send the packet back to the NIC. Second, programs that always drop packets are usually faster on x86, unless the processor has a low frequency, such as 1.2GHz. Here, it should be noted that such programs are rather uncommon; one example is a program that gathers network traffic statistics from packets received through a network tap. Finally, programs that are long, for example, tx_ip_tunnel with its 283 instructions, are faster on x86. As we noted in the case of Katran, with longer programs the low clock frequency of the hXDP implementation can become problematic.

Figure 9. Throughput of Linux’s XDP programs. hXDP is faster for programs that perform TX or redirection.

5.1.1. Comparison to other FPGA solutions. hXDP provides a more flexible programming model than previous work for FPGA NIC programming. However, in some cases, simpler network functions implemented with hXDP could also be implemented using other programming approaches for FPGA NICs, while keeping functional equivalence. One such example is the simple firewall presented in this article, which is also supported by FlowBlaze.28

Throughput. Leaving aside the cost of reimplementing the function using the FlowBlaze abstraction, we can generally expect hXDP to be slower than FlowBlaze at processing packets. In fact, in the simple firewall case, FlowBlaze can forward about 60Mpps vs. the 6.5Mpps of hXDP. The FlowBlaze design is clocked at 156MHz, like hXDP, and its better performance is due to its high level of specialization. FlowBlaze is optimized to process only packet headers, using statically defined functions. This requires loading a new bitstream on the FPGA when the function changes, but it enables the system to achieve the reported high performance. Conversely, hXDP has to pay a significant cost to provide full XDP compatibility, including dynamic network function programmability and processing of both packet headers and payloads.

Hardware resources. A second important difference is the amount of hardware resources required by the two approaches. hXDP needs about 18% of the NetFPGA logic resources, independently of the particular network function being implemented. Conversely, FlowBlaze implements a packet processing pipeline, with each pipeline stage requiring about 16% of the NetFPGA’s logic resources. For example, the simple firewall function implementation requires two FlowBlaze pipeline stages. More complex functions, such as a load balancer, may require 4 or 5 stages, depending on the implemented load-balancing logic.12

In summary, FlowBlaze’s pipeline leverages hardware parallelism to achieve high performance. However, it has the disadvantage of often requiring more hardware resources than a sequential executor, like the one implemented by hXDP. Because of that, hXDP is especially helpful in scenarios where only a small amount of FPGA resources is available, for example, when sharing the FPGA among different accelerators.
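The resource comparison can be made concrete with a small calculation. The sketch below uses only the approximate percentages quoted above (18% for hXDP, 16% per FlowBlaze stage); the function and constant names are hypothetical, and the linear per-stage scaling is a simplifying assumption.

```python
HXDP_LOGIC_PCT = 18             # approximate, independent of the network function
FLOWBLAZE_PCT_PER_STAGE = 16    # approximate, per pipeline stage


def flowblaze_logic_pct(stages: int) -> int:
    """Approximate NetFPGA logic used by a FlowBlaze pipeline (linear model)."""
    return stages * FLOWBLAZE_PCT_PER_STAGE


if __name__ == "__main__":
    # Stage counts taken from the text: 2 for the simple firewall,
    # 4 or 5 for a more complex function such as a load balancer.
    for name, stages in (("simple firewall", 2), ("load balancer", 4), ("load balancer", 5)):
        used = flowblaze_logic_pct(stages)
        print(f"{name:>15} ({stages} stages): FlowBlaze ~{used}% vs. hXDP ~{HXDP_LOGIC_PCT}%")
```

With these figures, the two-stage firewall already needs about 32% of the logic resources on FlowBlaze, and a five-stage function about 80%, whereas the hXDP footprint stays at about 18%.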

Suitable applications. hXDP can run XDP programs with no modifications; however, the results presented in this section show that hXDP is especially suitable for programs that can process packets entirely on the NIC and that are no more than a few tens of VLIW instructions long. The same observation has also been made for other offloading solutions.18

FPGA sharing. At the same time, hXDP succeeds in using few FPGA resources, leaving space for other accelerators. For instance, we could co-locate on the same FPGA several instances of the VLDA accelerator design for neural networks presented in Chen.7 Here, one important note concerns the use of memory resources (BRAM). Some XDP programs may need larger map memories, and the memory area dedicated to maps reduces the memory resources available to other accelerators on the FPGA. As such, the memory requirements of XDP programs, which are in any case known at compile time, are another important factor to consider when making program offloading decisions.
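Because eBPF maps are declared with a key size, a value size, and a maximum number of entries, a rough lower bound on a program's map memory can be computed before deciding whether to offload it. The following is a minimal sketch of such a check, not the hXDP implementation: the class, the function names, the example map, and the memory budget are all hypothetical, and the estimate ignores any per-entry metadata or alignment that a real map layout would add.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class MapSpec:
    """Compile-time description of an eBPF map (sizes in bytes)."""
    name: str
    key_size: int
    value_size: int
    max_entries: int

    def min_bytes(self) -> int:
        # Lower bound: raw key and value storage only, no metadata or padding.
        return (self.key_size + self.value_size) * self.max_entries


def total_map_bytes(maps: Iterable[MapSpec]) -> int:
    """Sum the lower-bound storage of all maps used by a program."""
    return sum(m.min_bytes() for m in maps)


def fits_budget(maps: Iterable[MapSpec], budget_bytes: int) -> bool:
    """Check whether the maps fit in the memory reserved for them (hypothetical budget)."""
    return total_map_bytes(maps) <= budget_bytes


if __name__ == "__main__":
    # Hypothetical flow table: 16 B keys, 8 B values, 1024 entries.
    maps = [MapSpec("flow_table", key_size=16, value_size=8, max_entries=1024)]
    print(total_map_bytes(maps), "bytes needed at minimum")
    print("fits in a 64 KiB budget:", fits_budget(maps, 64 * 1024))
```

A check of this kind could feed an offloading decision alongside program length, since both are known before the program is loaded.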

6. Related Work

NIC programming. AccelNet11 is a match-action offloading engine used in large cloud datacenters to offload virtual switching and firewalling functions, implemented on top of the Catapult FPGA NIC.6 FlexNIC23 is a design based on the RMT4 architecture, which provides a flexible network DMA interface used by operating systems and applications to offload stateless packet parsing and classification. P4->NetFPGA1 and P4FPGA30 provide high-level synthesis from the P43 domain-specific language to an FPGA NIC platform. FlowBlaze28 implements a finite-state machine abstraction using match-action tables on an FPGA NIC, to implement simple but high-performance network functions. Emu29 uses high-level synthesis to implement functions described in C# on the NetFPGA. Compared to these works, instead of match-action or higher-level abstractions, hXDP leverages abstractions defined by the Linux kernel and implements network functions described using the eBPF ISA.

The Netronome SmartNICs implement a limited form of eBPF offloading.24 Unlike hXDP, which implements a solution specifically targeted at XDP programs, the Netronome solution is added on top of their network processor as an afterthought, and therefore, it is not specialized for the execution of XDP programs.

NIC hardware. Previous work presenting VLIW core designs for FPGAs did not focus on network processing.21, 22 Brunella5 is the closest to hXDP. It employs a non-specialized MIPS-based ISA and a VLIW architecture for packet processing. In contrast, hXDP has an ISA design specifically targeted at network processing using the XDP abstractions. Forencich13 presents an open source 100Gbps FPGA NIC design. hXDP can be integrated into such a design to implement an open source FPGA NIC with XDP offloading support.

7. Conclusion

This paper presented the design and implementation of hXDP, a system to run Linux’s XDP programs on FPGA NICs. hXDP can run unmodified XDP programs on an FPGA, matching the performance of a high-end x86 CPU core clocked at more than 2GHz. Designing and implementing hXDP required a significant research and engineering effort, which involved the design of a processor and its compiler. While we believe that the performance results for a design running at 156MHz are already remarkable, we also identified several areas for future improvement. In fact, we consider hXDP a starting point and a tool to design future interfaces between operating systems/applications and NICs/accelerators. To foster work in this direction, we make our implementations available to the research community.

Acknowledgments

The research leading to these results has received funding from the ECSEL Joint Undertaking in collaboration with the European Union’s H2020 Framework Programme (H2020/2014–2020) and National Authorities, under grant agreement n. 876967 (Project “BRAINE”).

Figure. Watch the authors discuss this work in the exclusive Communications video. https://cacm.acm.org/videos/hxdp

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike International 4.0 License.