Today's highly distributed computing frameworks introduce an array of practical and technical challenges. Yet, none is bigger than ensuring that processing takes place at exactly the right place and that the optimal level of resources is devoted to the task. Over the last few years, as the boundaries of computing have expanded and the Internet of Things (IoT) has taken shape, boosting speed and reducing latency have become paramount.

"There's a growing recognition that traditional computing approaches aren't effective or sustainable for the IoT," says Sam Bhattarai, director of technology and engagement for Toshiba America Research Institute. Even cloud and conventional edge computing, which place resources closer to the point of data processing, often have limited impact for highly distributed systems, such as those used by smart cities or specialized medical applications. In many cases, latency introduces problems and risks.

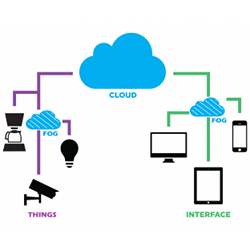

Consequently, a new concept is taking shape: fog computing. It pushes data processing through an intermediary layer of computing and communications, such as an IoT gateway or "fog node" that connects to multiple IoT devices and data points. Fog computing, through a combination of hardware and software, determines where to tackle processing for optimized performance and cost efficiency.

"The system provides computing services along the continuum from cloud to things," says Yang Yang, a professor at ShanghaiTech University and the Shanghai Institute of Microsystem and Information Technology (SIMIT) of the Chinese Academy of Sciences, and director for the Greater China Region of the OpenFog Consortium.

Beyond the Clouds

The idea of pushing processing closer to the precise point at which it is needed at any given moment is part of the natural evolution of computing, Yang says. The term "fog computing" was introduced by Cisco Systems in 2012. The goal is to extend computing capabilities from the cloud to the edge of the network in order to more efficiently process the terabytes of data generated by millions of applications daily. Today, technology providers from around the world are updating switches, routers and other gear to better fit a fog computing model, which is promoted by the OpenFog Consortium and the IEEE Standards Association.

The use cases are significant and span numerous areas and industries. The technology could help autonomous vehicles adapt to changing conditions and smart buildings operate more efficiently. It could also improve the way logistics companies manage shipping, better integrate disparate healthcare functions, boost the delivery of video entertainment over the Internet, and even change the way agricultural firms plant and manage crops.

Mung Chiang, John A. Edwardson Dean of the College of Engineering at Purdue University, and founder of the Princeton EDGE Lab devoted to research, education and innovation in edge computing and edge networking, describes fog as a superset of edge computing.

Bhattarai says there are some important distinctions between edge computing and fog computing. Essentially, the edge relies on one-to-one connectivity between the edge machine and the cloud, while the fog relies on a variety of methods and tools, depending on the specific need and the particular requirements of the IoT devices and the protocols they use. In some cases, fog could use smaller clouds mixed with fog infrastructure; it could also extend horizontally across industries and vertical domains, he explains.

Consider a smart city scenario, where it's critical to manage traffic signals and optimize the flow of vehicles. Data may need to flow from one device or node to another, whether a block away or across town, in real time. "If you attempt to address the task through basic edge connectivity, the latency can become quite extensive because all the data has to go to the cloud," Bhattarai says. With a fog architecture, however, "you can have processing take place at the point it's required at the exact time it is required. It can take place in a way that best fits the computing requirements."
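To make the placement idea concrete, here is a minimal Python sketch of one way a system might decide where a task runs: it picks the cheapest tier whose network round trip still fits the task's latency budget. The tier names, round-trip times, and relative costs are illustrative assumptions, not figures from Bhattarai, the OpenFog Consortium, or any real deployment, and `choose_tier` is a hypothetical helper rather than part of any fog platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    round_trip_ms: float  # assumed network round trip to reach this tier
    cost_per_task: float  # assumed relative cost of processing there


def choose_tier(tiers, latency_budget_ms):
    """Return the cheapest tier whose round trip fits the budget, or None."""
    eligible = [t for t in tiers if t.round_trip_ms <= latency_budget_ms]
    return min(eligible, key=lambda t: t.cost_per_task, default=None)


# Hypothetical numbers, chosen only to illustrate the trade-off.
TIERS = [
    Tier("device", round_trip_ms=1.0, cost_per_task=5.0),
    Tier("fog-node", round_trip_ms=10.0, cost_per_task=2.0),
    Tier("cloud", round_trip_ms=120.0, cost_per_task=1.0),
]

# A traffic-signal adjustment with a tight deadline cannot wait for the cloud.
signal_tier = choose_tier(TIERS, latency_budget_ms=20.0)
# A nightly traffic report can tolerate the long trip and use the cheapest tier.
report_tier = choose_tier(TIERS, latency_budget_ms=500.0)
```

Under these assumed numbers, the delay-sensitive task lands on the fog node while the batch report goes to the cloud. A real system would also weigh load, bandwidth, and data locality, which this sketch leaves out.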

The Fog Lifts

Fog computing ultimately addresses a few challenges: it creates more efficient data pathways while potentially lowering complexity and costs. "There are physical constraints associated with how much data can be transferred when you have upwards of 20 billion IoT devices," Bhattarai explains. "Conventional infrastructure and bandwidth cannot address the data requirements." Similarly, it's not efficient to send all the data from all the devices and sensors to the cloud. "If you try to architect a framework to accommodate the massive data requirements, it becomes completely unaffordable," he adds.

Yang says fog computing complements emerging technology trends such as 5G mobile communications, embedded AI, and IoT tools and applications. The primary challenges for now are addressing system architecture and interoperability. "You have to manage dispersive computing resources and services properly so that data processing and decisions can take place locally. This also requires new and different interfaces and configuration," says Yang. "You want to be able to use and reuse computing resources and components. A conventional cloud environment is too centralized to provide ideal results for the IoT, especially for various delay-sensitive applications."

A market report from 451 Research predicts the fog computing market will exceed $18 billion by 2022—with the energy, transportation, healthcare, and industrial markets leading in fog adoption. Notes Christian Renaud, research director for the Internet of Things at 451 Research and lead author of the report: "It's clear that fog computing is on a growth trajectory to play a crucial role in IoT, 5G, and other advanced distributed and connected systems."

Samuel Greengard is an author and journalist based in West Linn, OR, USA.
