Researchers at the University of Toronto have used artificial intelligence to develop a way to block facial recognition software from identifying faces on the Web and in other digital media.

"Personal privacy is a real issue as facial recognition becomes better and better," says Parham Aarabi, an associate professor of communications and computer engineering at the University of Toronto and a lead author on the research. "This is one way in which beneficial anti-facial-recognition systems can combat that ability."

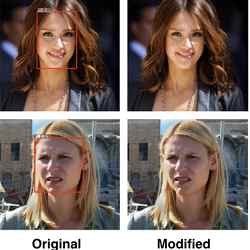

Aarabi and his colleagues have developed AI software that essentially can render a person in a photo completely unidentifiable to facial recognition software, simply by altering a few pixels. Such changes, while catastrophic to the performance of facial recognition software, are barely noticeable to the naked eye.

The prototype AI software works by pitting two AI algorithms against each other, according to Avishek Joey Bose, a graduate student at the University of Toronto and another lead author on the study. The first, a facial recognition algorithm, works to match photos of faces against a known set of photographed faces stored in its database, Bose explains. The second, a cloaking algorithm, is tasked with finding ways to frustrate the facial recognition algorithm by making subtle changes to just a few pixels in each image.

"It is essentially an adversarial AI system, with one network focused on generating alterations to an image to make the face undetectable, and another network focused on detecting faces," Aarabi says. "As the two of them train, they both become better at their intended tasks.

"They are trained together such that noise is added to a image with a face, then either the altered face or an altered random image, with or without a face, are sent to the detection network," Aarabi says. "Either it detects what is in the photo, face versus no face, or it does not, the results of which are used to reward/punish each network."

One illustration of how the digital battle plays out: should the cloaking algorithm discover that the corners of the eyes are critical reference points for the facial recognition algorithm, it may alter just a few pixels at that location to render that reference point unusable.
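A rough sketch of that idea, under heavy assumptions: the "recognizer" here is a hypothetical linear scoring function, and the pixels with the largest weights stand in for reference points such as the corners of the eyes. The function names, the value of `k`, and the perturbation size are illustrative, not taken from the study.

```python
import numpy as np

def most_influential_pixels(weights, k):
    """Indices of the k pixels whose weights have the largest magnitude."""
    return np.argsort(np.abs(weights))[-k:]

def cloak_few_pixels(image, weights, k, epsilon):
    """Alter only the k most influential pixels, each by at most epsilon."""
    flat = image.ravel().copy()
    idx = most_influential_pixels(weights, k)
    # Move each chosen pixel against the sign of its weight to lower the score.
    flat[idx] -= epsilon * np.sign(weights[idx])
    return np.clip(flat, 0.0, 1.0).reshape(image.shape)

rng = np.random.default_rng(1)
weights = rng.normal(size=64)               # hypothetical linear recognizer
image = rng.uniform(0.3, 0.7, size=(8, 8))  # hypothetical 8x8 grayscale "photo"
k = 3

cloaked = cloak_few_pixels(image, weights, k=k, epsilon=0.2)
changed = int((cloaked != image).sum())
score_before = float(image.ravel() @ weights)
score_after = float(cloaked.ravel() @ weights)

print(f"pixels changed: {changed} of {image.size}")
print(f"linear score before: {score_before:.3f}, after: {score_after:.3f}")
```

Only the selected pixels differ between the two images, yet the score drops because those pixels carry the most weight in this toy model.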

The Toronto researchers put the prototype software through its paces on the 300-W face dataset, an industry-standard collection of photos. Their anti-facial-recognition software rendered 99.5% of the images in the dataset unrecognizable to the algorithm they were attempting to frustrate.

"Ten years ago, these algorithms would have to be human-defined," Aarabi says. "But now, neural nets learn by themselves; you don't need to supply them anything except training data. In the end, they can do some really amazing things. It's a fascinating time in the field, there's enormous potential."

Of course, one limitation of the researchers' cloaking algorithm is that it can only frustrate the specific facial recognition algorithm used in the University of Toronto study. However, Bose is confident the principles discerned in the study can ultimately be applied to all variations of facial recognition software.

In fact, he's so confident, he is in the process of launching a company based on those principles called Faceshield, which will release software designed to frustrate all major versions of facial recognition software on the market. "We are currently researching methods which allow us to construct attacks in the black box setting and against multiple detectors via co-training and other gradient approximation algorithms," Bose says.

Bose also says he understands he will need to overcome another limitation of the software: the ability of facial recognition software makers to study how he's frustrating their software and then come up with ways to neutralize the digital noise he's creating.

Nicholas Carlini, a research scientist at Google Brain, agrees. "This approach will be perfectly useful for hiding from existing algorithms temporarily; I don't want my face recognized for the next week," Carlini says. "But as soon as we allow the recognition models to retrain, this approach is not going to be sufficient.

"Once I've 'committed' to a private image of myself, it's online for good," Carlini says. "Anyone is free to re-train a new classify, and try and classify this one correctly, according to my fixed strategy."

Bose agrees the cat-and-mouse game will be endless, but in his view, it will probably take much longer for facial recognition software companies to reverse-engineer Faceshield's capabilities and then distribute the upgrades to their software. "Realistically, the (Faceshield) software would need to be updated every major machine learning conference cycle, as more and more defenses are released," Bose says.

It is likely the emergence of cloaking software designed to preserve privacy in the digital world will be welcomed by many.

"I think their work is at the forefront of a new class of research that uses the limitations of modern machine learning for good," says Patrick McDaniel, director of the Institute for Network and Security Research at The Pennsylvania State University.

"Probing, as Aarabi and Bose do, the limits of machine learning will help us to understand the functional, security, and privacy challenges of future systems build on this still-opaque field of computer science," McDaniel says.

Says Carlini, "The idea of taking a flaw in neural networks and repurposing it for good is very interesting. I hope to see more work in this direction."

As for blowback from the law enforcement community, which could perceive Faceshield-like software as a direct affront to its efforts to nab criminals and protect the homeland, "We have not received any concerns or complaints," Aarabi says. "So far, law enforcement has approached this work with curiosity and interest, but nothing more."

Joe Dysart is an Internet speaker and business consultant based in Manhattan, NY, USA.
