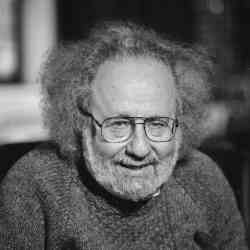

Born in 1928, Martin Davis has witnessed the development of computer science since its birth. Not long after getting his Ph.D. in mathematics from Princeton University in 1950, where his advisor was mathematician and logician Alonzo Church, Davis found himself programming the Ordnance Discrete Variable Automatic Computer (ORDVAC) at the University of Illinois. He spent most of his career at New York University (NYU), where he was one of the founding members of the computer science department.

Today, although he lives in Berkeley, CA, Davis is professor emeritus in the Department of Computer Science, and the Courant Institute of Mathematical Sciences, at NYU.

Closest to Davis's heart is mathematical logic, which provides the theoretical basis for all things computational. He has had a lifelong obsession with Hilbert's Tenth Problem (H10), which asks for an algorithm that can decide whether any Diophantine equation has a solution in the integers (a Diophantine equation is a polynomial equation with integer coefficients).

In his doctoral thesis, Davis made what was later called "Davis's daring hypothesis," which forged a link between logic and number theory. He hypothesized that the recursively enumerable sets (those whose members can be listed by an algorithm) and the Diophantine sets (those that are the range of a Diophantine function) are the same. If they were, it would immediately follow that H10 is unsolvable.

In work with mathematicians Hilary Putnam and Julia Robinson, Davis made significant advances towards confirming the daring hypothesis. That work allowed Russian mathematician and computer scientist Yuri Matiyasevich to add the final piece to the puzzle in 1970 and prove that H10 is unsolvable.

CACM spoke to Davis in the summer of 2021, a few months after his 93rd birthday. What follows is an edited version of the conversation.

You are a skeptic of the conjecture that P is different from NP. Can you tell me about that?

People think of the class NP as sort of analogous to the recursively enumerable sets. The analogy is based on assuming that polynomial-time computability is an analog of computability, that polynomial-time computability is feasible computability. Why would you believe that? It's certainly not true in any reasonable sense. If you have a polynomial bound of high degree with a big numerical coefficient, it's not particularly computationally feasible at all. The class has good mathematical closure properties. It's certainly an interesting class, but why identify it with feasibility?

There are exponential-time algorithms that, in the realm where they are actually employed, are quite useful and work well. When I give talks on this, I quote the results of Margaret Wright. At first it was thought that linear programming was not polynomial-time. It was considered a big breakthrough when a polynomial-time algorithm for linear programming was found, but that algorithm didn't work very well! As Margaret Wright showed, the simplex method, which is exponential in the worst cases, is better and faster for many cases.

Part of my skepticism also relates to my experience with Hilbert's Tenth Problem, where it was very clear that people have no intuition at all about polynomials with high degree.

By the way, I don't know what his reasoning is, but Donald Knuth agrees with me that it's by no means an open and shut case that P and NP are different. I say I would bet 50-50.

What about NP-complete problems?

I think NP-complete problems are hard problems, no doubt about it. I don't imagine that anyone is going to find a nice, cute, fast algorithm for any NP-complete problem. That doesn't mean that they can't find a polynomial-time algorithm, maybe just not a very feasible one. Always lurking in the back of these heuristic arguments is the idea that "polynomial-time" and "feasible" are the same thing.

What would be a better way to define feasibility?

[laughs]

I guess if you had one, you'd be writing a paper on it.

Right. It's not clear that there is a very precise notion that you could lay your fingers on. It may be that some algorithms are harder than others, and there is just a range.

Also, what is feasible depends partly on what computer facilities you have available. For my book The Universal Computer1, I wanted to illustrate the idea of convergence with the number π. So I wrote a program that used Leibniz's series π/ 4 = 1 – 1/3 + 1/5 – 1/7 … and computed it to maybe 20,000 terms. Not so long ago, the idea of computing π by adding up 20,000 terms in Leibniz's series would have seemed utterly silly. And yet, it was a thing that an amateur—as I am when it comes to numerical computation—could easily do with a tool he had at home and a little knowledge of how to write a computer program.

You mentioned your book, The Universal Computer. In the 2018 edition, you added some new material on machine learning and artificial intelligence. What has surprised you most about machine learning?

That these neural net models were so useful and that they could work so well. I'd been a skeptic of neural nets for many years. The original idea was that we are mimicking brains. And I thought, 'It's just another model, there's no particular virtue to it'. But in fact, for some problems, like winning at the game Go, it works astonishingly well. There, my intuition was totally wrong.

There is no theory of why machine learning works so well. Do you think there ever will be such a theory?

I don't think it's so mysterious. It is a convergence process, a successive approximation, the sort of thing that has been used for years in analysis. It converges rapidly if you pick the right function in building the multi-level neural net, so I don't think there is a special deep theory involved. I suspect that we really are mimicking nature.

To become a virtuoso pianist, you practice seven hours a day. Why can't you just read a manual that tells you, "to be a virtuoso pianist, this is what you have to do"? That doesn't work. You sit at the piano, you go to a teacher—even if you are a professional—who watches what you do and says, "No, your pinky was off by a tiny amount." It's a convergence process.

Right now, artificial intelligence is exploding in terms of research output and its uses in society. Is this something positive, or something to be feared?

I approach the question this way: Can we make an automaton that can do all the things we do and maybe do them better? With difficult games like Go and chess, yes, we can. You can't beat the machines anymore.

This relates to the question of how we do these things ourselves, and we really don't know. Does the brain actually carry out algorithms? It certainly does things that, when we make an automaton do them, we use algorithms. Our brain obviously carries out searches. We try to remember a piece of information, and it doesn't pop right up, but we wait a while and then suddenly it pops up. And certainly, we know that universal computation doesn't need much. Stephen Wolfram has made almost a cult religion out of the fact that universal computation is available in very small entities. If, in fact, the brain does all these wonderful things by executing algorithms, then of course we are going to be able to make computers that do the same thing.

There is a guy at Rice University who has discussed this. What's his name…?

Moshe Vardi?

Yes. See, you just did a search! He says there is no doubt that in a generation, we will have machines that can do anything that people can do. That may be a little over-optimistic.

If you look at the stunning accomplishments of these neural nets, the one thing they don't do is come up with new ideas. The question is to what extent there is some higher-level thing going on in our brains that is just, as it were, a different technology altogether. I don't think so. I suspect it's all more of the same, at another level. After all, we get to be good mathematicians by doing a lot of mathematics.

Then how do you account for the insights or leaps of imagination that great mathematicians make when they perceive a new structure, or connect two things that seemed very different? You did that with Hilbert's Tenth Problem, when you thought that the recursively enumerable sets and the Diophantine sets could be the same thing.

Well, after all, one was a subset of the other, so it wasn't like connecting two entirely different things. It was more like extending the reach of something that looked like it was very limited. It was a boy's enthusiasm as much as anything! I certainly wasn't convinced it was true in any ironclad way; I just didn't think it was as crazy as most people did.

But it was thinking outside the box, thinking beyond what you were taught. If the brain is really just taking in and synthesizing information, how do you account for such leaps?

How our brain does it, of course I have no idea. But it's clearly a useful survival skill. It's one of the things that has made human society possible. At some point somebody said, "You know, fire isn't just something awful that burns us. It makes food better."

What about Mozart writing a symphony? Computers haven't been able to do something like that.

Well, they have composed music. They haven't composed anything I'd like to listen to! But Mozarts are very rare. Like any composer, Mozart honed his craft, and his brain was somehow hooked up in a very special way that made musical ideas come together. We have no idea how that happened. We certainly don't know how to make Mozarts. But we might, one day, know how.

So you think even that would be possible.

I don't know any reason why it should be impossible. This leads to the old mind-body issue. If you asked Kurt Gödel, he would say it's ridiculous to think that protoplasm is doing all this. He believed the mind is some abstract thing that is out and beyond, that the mind makes use of the brain but the brain doesn't produce the mind. On the other hand, Marvin Minsky says the mind is what the brain does.

The triumphs of 20th century biology have undermined the case for vitalism, which is the philosophical position that the properties of living things cannot be explained in terms of the usual laws of physics and chemistry. Gödel was a vitalist with respect to mental phenomena, a view that can still be coherently maintained in the present state of knowledge in neuroscience.

Allyn Jackson is a journalist specializing in science and mathematics, who is based in Germany.

[1] The Universal Computer: The Road from Leibniz to Turing, third edition 2018, CRC Press.

Join the Discussion (0)

Become a Member or Sign In to Post a Comment